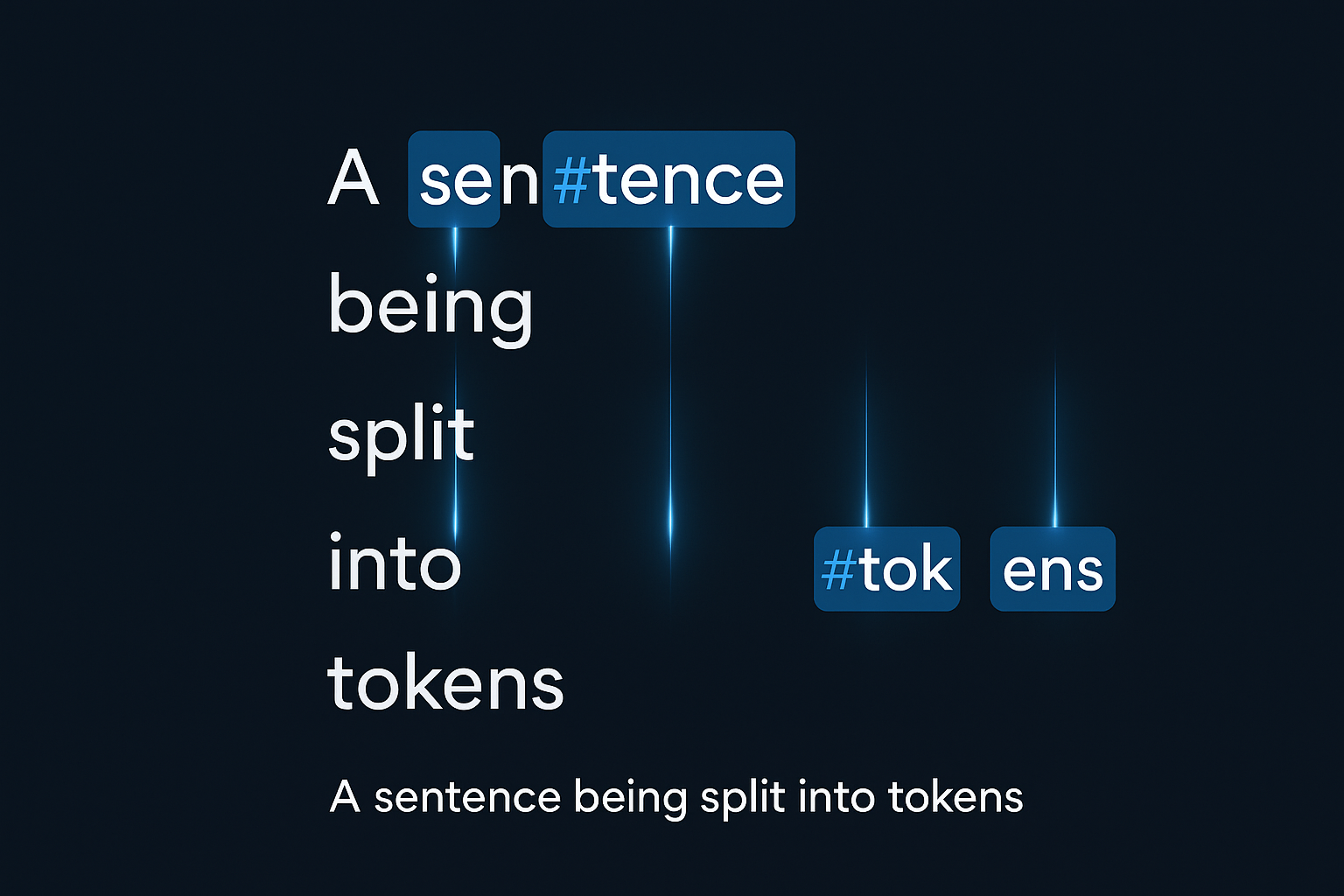

ML models can’t read text; they only understand numbers. So how do we convert “Hello world!” into something a neural network can process? That’s tokenization: splitting text into units called tokens, each of which maps to an integer ID. It seems simple, but there’s a lot of nuance.

The fundamental question

How do you split "Don't tokenize carelessly!"?

You have several options:

- Character level: ["D", "o", "n", "'", "t", " ", "t", ...]

- Word level: ["Don't", "tokenize", "carelessly", "!"]

- Subword: ["Don", "'t", "token", "ize", "care", "less", "ly", "!"]

Each approach has tradeoffs. Modern models mostly use subword tokenization, which sits between the two extremes: shorter sequences than characters, without the huge vocabulary of whole words.


Character-level tokenization

Every single character becomes its own token.

The good:

- Tiny vocabulary (just letters + punctuation)

- Never see “unknown” words

- Works for any language, even made-up words

The bad:

- Sequences get VERY long (“hello” = 5 tokens)

- Harder for the model to learn word-level meanings

- More computation needed

Rarely used on its own nowadays, but some subword tokenizers fall back to characters (or raw bytes) for text their vocabulary can't otherwise cover.
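A minimal sketch in plain Python. The toy vocabulary is built only from the characters of the example, so a character it has never seen would still be missing; the byte-level line illustrates the fallback idea (any text is covered by just 256 byte values), not any particular model's scheme.

```python
text = "hello"

# Character level: every character is its own token
char_tokens = list(text)

# Toy vocabulary built from the characters seen, then tokens mapped to IDs
vocab = {ch: i for i, ch in enumerate(sorted(set(text)))}
ids = [vocab[ch] for ch in char_tokens]

# Byte level: UTF-8 bytes as tokens; "é" becomes two bytes
byte_ids = list("héllo".encode("utf-8"))

print(char_tokens)  # ['h', 'e', 'l', 'l', 'o']
print(ids)          # [1, 0, 2, 2, 3]
print(byte_ids)     # [104, 195, 169, 108, 108, 111]
```

Note how "hello" already costs 5 tokens, and the accented word costs 6 at the byte level.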

Word-level tokenization

Split on whitespace and punctuation. The intuitive approach.

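```python
import re

text = "Hello, world!"

# Naive whitespace split: punctuation stays attached to words
tokens = text.split()  # ["Hello,", "world!"]

# Regex split: runs of word characters, or single punctuation marks
tokens = re.findall(r"\w+|[^\w\s]", text)  # ["Hello", ",", "world", "!"]
```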

Pros:

- Intuitive

- Short sequences

- Each token meaningful

Cons:

- Huge vocabulary (every word form needs its own entry)

- Out-of-vocabulary (OOV) words: what if “unbelievable” isn't in the vocab?

- Morphology is ignored: run, runs, running are 3 unrelated tokens
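To see both cons in action, here is a minimal word-level tokenizer sketch. The `<unk>` token name, the tiny corpus, and the `max_size` cap are illustrative assumptions; any word outside the vocabulary collapses to a single unknown ID, and related word forms get unrelated IDs.

```python
import re
from collections import Counter

def word_tokenize(text):
    # Lowercase, then split into words and single punctuation marks
    return re.findall(r"\w+|[^\w\s]", text.lower())

def build_vocab(corpus, max_size):
    # Keep the most frequent tokens; ID 0 is reserved for unknown words
    counts = Counter(tok for text in corpus for tok in word_tokenize(text))
    vocab = {"<unk>": 0}
    for tok, _ in counts.most_common(max_size - 1):
        vocab[tok] = len(vocab)
    return vocab

def encode(text, vocab):
    # Any word missing from the vocabulary maps to the <unk> ID
    return [vocab.get(tok, vocab["<unk>"]) for tok in word_tokenize(text)]

corpus = ["I run every day.", "She runs fast.", "They are running late."]
vocab = build_vocab(corpus, max_size=100)

print(encode("unbelievable", vocab))      # [0]  (out of vocabulary)
print(encode("run runs running", vocab))  # three unrelated IDs
```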

Subword tokenization

The sweet spot: keep common words whole and break rare or unknown words into known pieces.

“unhappiness” → [“un”, “happi”, “ness”]

The model can then make sense of new words from components it already knows.
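A minimal sketch of splitting words into known pieces, assuming a hand-picked toy vocabulary (hypothetical; real vocabularies are learned from data). It uses greedy longest-match-first segmentation, which is how BERT's WordPiece tokenizer splits words at inference time (minus the ## prefixes); BPE tokenizers instead replay their learned merges.

```python
def segment(word, vocab):
    """Greedy longest-match-first segmentation of one word into known pieces."""
    pieces, start = [], 0
    while start < len(word):
        # Try the longest remaining substring first, then shrink it
        for end in range(len(word), start, -1):
            piece = word[start:end]
            if piece in vocab:
                pieces.append(piece)
                start = end
                break
        else:
            return ["<unk>"]  # no known piece starts at this position
    return pieces

# Hypothetical toy vocabulary
vocab = {"un", "happi", "ness", "kind", "care", "less", "ly"}

print(segment("unhappiness", vocab))  # ['un', 'happi', 'ness']
print(segment("unkindness", vocab))   # ['un', 'kind', 'ness']
```

"unkindness" never appears whole in the vocabulary, yet it is covered entirely by pieces that do.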

BPE - Byte Pair Encoding

Start with individual characters, then repeatedly merge the most frequent adjacent pair of symbols into a new symbol.

Corpus: "low low low lower lowest"

Initial (</w> marks the end of a word): l o w </w> l o w </w> l o w </w> l o w e r </w> l o w e s t </w>

Iteration 1: merge "l o" → "lo" (5 occurrences, tied with "o w")

Iteration 2: merge "lo w" → "low" (5 occurrences)

... continue until the target vocabulary size is reached

GPT-2, GPT-3, and GPT-4 use BPE, in a byte-level variant that starts from bytes rather than characters.
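The training loop described above can be sketched in a few lines. This is a minimal illustration, assuming whitespace pre-splitting and the </w> end-of-word marker from the worked example; ties go to whichever pair is counted first. Production tokenizers such as GPT-2's byte-level BPE differ in many details.

```python
from collections import Counter

def get_pair_counts(words):
    # words maps a tuple of symbols to that word's frequency
    pairs = Counter()
    for symbols, freq in words.items():
        for a, b in zip(symbols, symbols[1:]):
            pairs[(a, b)] += freq
    return pairs

def merge_pair(words, pair):
    # Replace every occurrence of the pair with a single merged symbol
    merged = {}
    for symbols, freq in words.items():
        out, i = [], 0
        while i < len(symbols):
            if i < len(symbols) - 1 and (symbols[i], symbols[i + 1]) == pair:
                out.append(symbols[i] + symbols[i + 1])
                i += 2
            else:
                out.append(symbols[i])
                i += 1
        merged[tuple(out)] = merged.get(tuple(out), 0) + freq
    return merged

def train_bpe(corpus, num_merges):
    word_freqs = Counter(corpus.split())
    words = {tuple(w) + ("</w>",): f for w, f in word_freqs.items()}
    merges = []
    for _ in range(num_merges):
        pairs = get_pair_counts(words)
        if not pairs:
            break
        best = max(pairs, key=pairs.get)  # most frequent pair
        merges.append(best)
        words = merge_pair(words, best)
    return merges

def encode_word(word, merges):
    # Tokenize a word by replaying the learned merges in order
    symbols = tuple(word) + ("</w>",)
    for pair in merges:
        symbols = next(iter(merge_pair({symbols: 1}, pair)))
    return list(symbols)

merges = train_bpe("low low low lower lowest", num_merges=3)
print(merges)  # [('l', 'o'), ('lo', 'w'), ('low', '</w>')]
print(encode_word("lowest", merges))  # ['low', 'e', 's', 't', '</w>']
```

The first two merges reproduce the iterations listed above; the third glues "low" to the end-of-word marker because the bare word "low" occurs three times.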

WordPiece

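Similar to BPE, but instead of merging the most frequent pair, it merges the pair that most increases the likelihood of the training data.

BERT uses WordPiece. Tokens that continue a word (rather than start it) are prefixed with ##.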

“unhappiness” → [“un”, “##happi”, “##ness”]
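To make "likelihood instead of frequency" concrete, here is a sketch of the first merge decision on the BPE example corpus. It uses one common formulation of the WordPiece score (the one taught in the Hugging Face course): freq(pair) / (freq(first) × freq(second)). Treat it as an approximation rather than BERT's exact training procedure.

```python
from collections import Counter

def split_word(word):
    # WordPiece-style initial split: continuation characters get a "##" prefix
    return [word[0]] + ["##" + ch for ch in word[1:]]

def count_pairs_and_symbols(word_freqs):
    pair_counts, symbol_counts = Counter(), Counter()
    for word, freq in word_freqs.items():
        symbols = split_word(word)
        for s in symbols:
            symbol_counts[s] += freq
        for a, b in zip(symbols, symbols[1:]):
            pair_counts[(a, b)] += freq
    return pair_counts, symbol_counts

word_freqs = Counter("low low low lower lowest".split())
pairs, symbols = count_pairs_and_symbols(word_freqs)

def wordpiece_score(pair):
    # Pair frequency divided by the product of its parts' frequencies
    a, b = pair
    return pairs[pair] / (symbols[a] * symbols[b])

# BPE criterion: the raw most frequent pair (first one wins ties)
bpe_choice = max(pairs, key=pairs.get)
# WordPiece-style criterion: the highest normalized score
wordpiece_choice = max(pairs, key=wordpiece_score)

print(bpe_choice)        # ('l', '##o')
print(wordpiece_choice)  # ('##s', '##t')
```

BPE picks the frequent pair l + ##o, even though l and ##o are individually just as frequent. WordPiece picks ##s + ##t (score 1.0) because those two symbols only ever appear together.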

SentencePiece

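Treats the input as a raw stream of characters, with spaces encoded as the symbol ▁, so it needs no language-specific pre-tokenization (handy for languages such as Japanese or Chinese that don't put spaces between words). SentencePiece is a library rather than a single algorithm: it implements both BPE and a unigram language-model tokenizer.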

“hello world” → [“▁hello”, “▁world”]

Used by T5, LLaMA.
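A minimal sketch of the ▁ convention, not the SentencePiece library itself. Prepending one ▁ mirrors SentencePiece's default "dummy prefix" behavior (its `add_dummy_prefix` option); the split at ▁ boundaries is a toy stand-in for the learned BPE or unigram segmentation that would normally follow.

```python
import re

SPACE = "\u2581"  # "▁", the symbol SentencePiece uses to mark whitespace

def mark_spaces(text):
    # Replace spaces with ▁ and prepend one, so the first word
    # looks like every other word
    return SPACE + text.replace(" ", SPACE)

def split_at_markers(marked):
    # Toy segmentation: each piece starts at a ▁
    return re.findall(SPACE + "[^" + SPACE + "]*", marked)

def detokenize(pieces):
    # Lossless decoding: join pieces, turn ▁ back into spaces,
    # and drop the single dummy-prefix space
    text = "".join(pieces).replace(SPACE, " ")
    return text[1:] if text.startswith(" ") else text

pieces = split_at_markers(mark_spaces("hello world"))
print(pieces)              # ['▁hello', '▁world']
print(detokenize(pieces))  # hello world
```

Because whitespace lives inside the tokens, decoding needs no language-specific rules for where spaces go.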

In practice

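Using the Hugging Face transformers library:

```python
from transformers import AutoTokenizer

# The token lists in the comments are illustrative; the exact split
# depends on each model's learned vocabulary.

# BERT (WordPiece)
tokenizer = AutoTokenizer.from_pretrained("bert-base-uncased")
tokens = tokenizer.tokenize("unhappiness")
# ['un', '##happiness']

# GPT-2 (BPE)
tokenizer = AutoTokenizer.from_pretrained("gpt2")
tokens = tokenizer.tokenize("unhappiness")
# ['un', 'happiness']

# T5 (SentencePiece)
tokenizer = AutoTokenizer.from_pretrained("t5-base")
tokens = tokenizer.tokenize("unhappiness")
# ['▁un', 'happiness']

# Tokens -> integer IDs, the numbers the model actually consumes
ids = tokenizer.convert_tokens_to_ids(tokens)
```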

Vocabulary size

Bigger vocab:

- Shorter sequences

- Each token carries more meaning

- More parameters in the embedding layer

Smaller vocab:

- Longer sequences

- Better generalization (each token appears more often in the training data)

- Less memory for the embedding layer

Common sizes:

- BERT (bert-base-uncased): 30,522

- GPT-2: 50,257

- LLaMA (1 and 2): 32,000
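The embedding-layer cost of a bigger vocabulary is simple arithmetic: the input embedding matrix has vocab_size × hidden_size entries. The sketch below assumes the published hidden sizes of the base configurations (768 for BERT-base and GPT-2 small, 4096 for LLaMA-7B) and bert-base-uncased's exact vocabulary of 30,522; models with an untied output projection pay for a second matrix of the same shape.

```python
def embedding_params(vocab_size, hidden_size):
    # One learned vector of length hidden_size per vocabulary entry
    return vocab_size * hidden_size

configs = {
    "BERT-base": (30_522, 768),
    "GPT-2 small": (50_257, 768),
    "LLaMA-7B": (32_000, 4096),
}

for name, (vocab_size, hidden_size) in configs.items():
    print(f"{name}: {embedding_params(vocab_size, hidden_size):,}")
# BERT-base: 23,440,896
# GPT-2 small: 38,597,376
# LLaMA-7B: 131,072,000
```

For BERT-base that is roughly a fifth of its ~110M parameters, which is why vocabulary size is a real design decision rather than a free knob.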
