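Pearson correlation measures linear relationships; Spearman measures monotonic ones. It asks: when X goes up, does Y consistently tend to go up (or consistently tend to go down)? Because it depends only on the ordering of the values, it works even for non-linear relationships, as long as they are monotonic.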

Definition

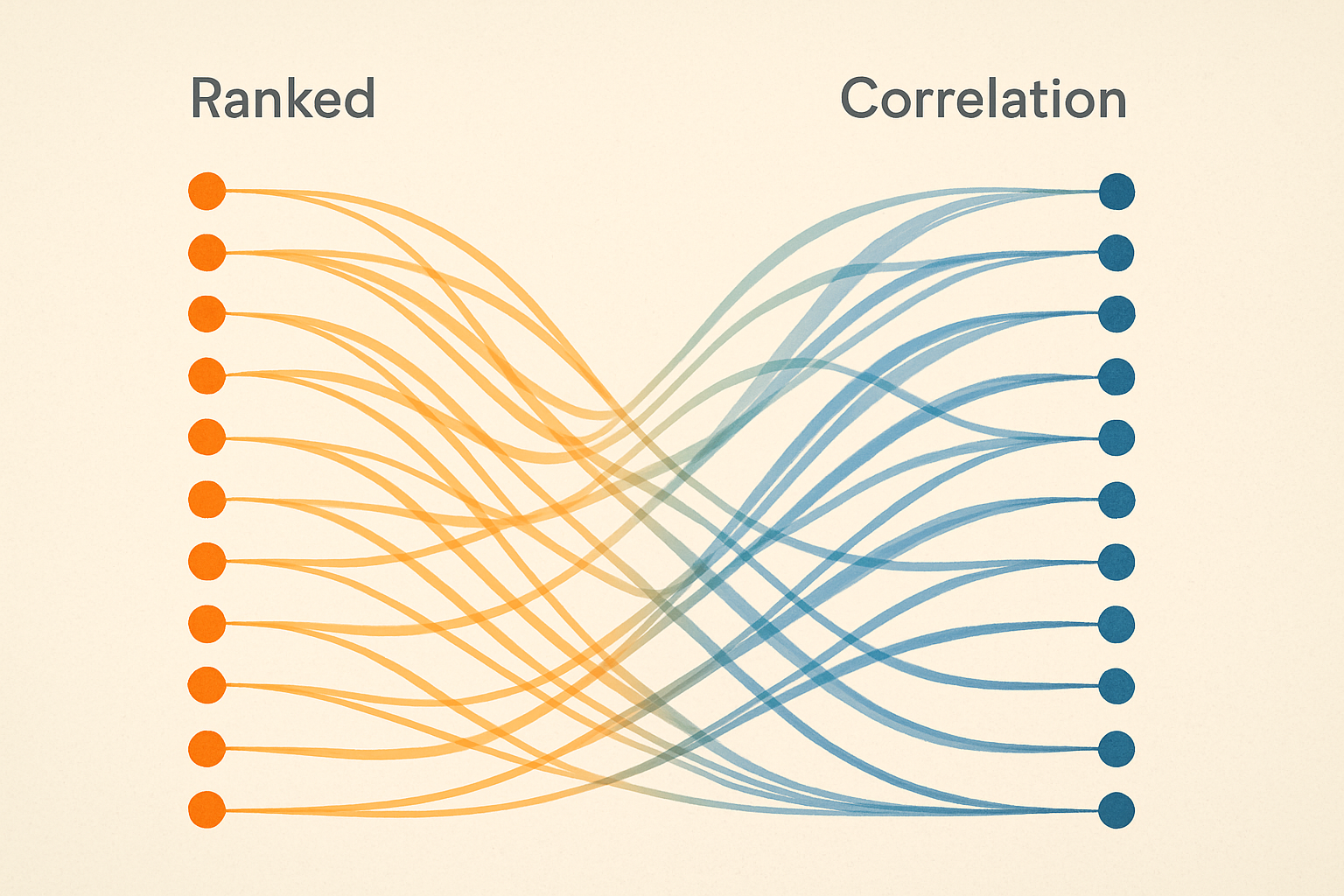

Spearman correlation = Pearson correlation on ranks.

- Convert values to ranks

- Compute Pearson correlation on ranks

$$\rho = 1 - \frac{6\sum d_i^2}{n(n^2-1)}$$

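Where $d_i$ is the difference between the two ranks of observation $i$ and $n$ is the number of observations. This shortcut formula is exact only when there are no tied ranks; with ties, compute Pearson correlation on the ranks instead.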
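The two-step definition can be checked numerically. The sketch below uses made-up sample values (an assumption for illustration, not data from this article) and compares three routes to the same number: Pearson on the ranks, the $d_i^2$ shortcut formula, and `spearmanr`.

```python
import numpy as np
from scipy.stats import pearsonr, rankdata, spearmanr

# Illustrative values with no ties (assumed for this sketch)
x = np.array([3.1, 1.2, 5.8, 4.4, 2.0, 9.7])
y = np.array([2.5, 0.7, 4.9, 6.1, 1.1, 8.3])

# Step 1: convert values to ranks (1 = smallest)
rx = rankdata(x)
ry = rankdata(y)

# Step 2: Pearson correlation on the ranks
rho_from_ranks = pearsonr(rx, ry)[0]

# Shortcut formula: rho = 1 - 6 * sum(d_i^2) / (n * (n^2 - 1))
d = rx - ry
n = len(x)
rho_shortcut = 1 - 6 * np.sum(d**2) / (n * (n**2 - 1))

# Library result for comparison
rho_scipy = spearmanr(x, y)[0]

print(rho_from_ranks, rho_shortcut, rho_scipy)
```

All three values should agree up to floating-point error here because the sample has no tied values.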


Example

| X | Y | Rank(X) | Rank(Y) | d | d² |
|---|---|---|---|---|---|
| 10 | 100 | 1 | 4 | -3 | 9 |
| 20 | 80 | 2 | 2 | 0 | 0 |
| 30 | 90 | 3 | 3 | 0 | 0 |
| 40 | 50 | 4 | 1 | 3 | 9 |

Both columns are ranked in ascending order (1 = smallest value), and d = Rank(X) - Rank(Y).

$$\rho = 1 - \frac{6 \times 18}{4 \times 15} = 1 - 1.8 = -0.8$$

Strong negative rank correlation: Y tends to fall as X rises.
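Hand calculations are easy to get wrong when the two columns end up ranked in different directions, so it can help to recompute the example programmatically. A minimal sketch, assuming both columns are ranked in ascending order with `rankdata`:

```python
import numpy as np
from scipy.stats import rankdata, spearmanr

# Columns X and Y from the example table
X = np.array([10, 20, 30, 40])
Y = np.array([100, 80, 90, 50])

# Rank both columns the same way: ascending, 1 = smallest value
rank_x = rankdata(X)
rank_y = rankdata(Y)

# Apply the shortcut formula to the rank differences
d = rank_x - rank_y
n = len(X)
rho_manual = 1 - 6 * np.sum(d**2) / (n * (n**2 - 1))

# spearmanr ranks internally, so it serves as an independent check
rho_scipy = spearmanr(X, Y)[0]

print("ranks of X:", rank_x)
print("ranks of Y:", rank_y)
print("rho (formula):", rho_manual, "rho (scipy):", rho_scipy)
```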

Computing in Python

from scipy.stats import spearmanr

# Basic usage

correlation, p_value = spearmanr(X, Y)

# With pandas

df['A'].corr(df['B'], method='spearman')

# Multiple variables at once

correlation_matrix = df.corr(method='spearman')
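For a version that runs end to end, the sketch below builds a small DataFrame and applies the same three calls. The values and the column names A, B and C are hypothetical placeholders, not data from this article.

```python
import pandas as pd
from scipy.stats import spearmanr

# Hypothetical data; column names "A", "B", "C" are placeholders
df = pd.DataFrame({
    "A": [1.0, 2.5, 3.1, 4.8, 5.2, 6.9],
    "B": [2.1, 2.0, 3.9, 4.1, 6.5, 6.0],
    "C": [9.0, 7.5, 8.1, 4.2, 3.3, 1.0],
})

# scipy returns the coefficient together with a p-value
rho_scipy, p_value = spearmanr(df["A"], df["B"])

# pandas returns only the coefficient
rho_pandas = df["A"].corr(df["B"], method="spearman")

# Pairwise Spearman correlations for every pair of columns
corr_matrix = df.corr(method="spearman")

print(f"scipy:  rho={rho_scipy:.3f}, p={p_value:.3f}")
print(f"pandas: rho={rho_pandas:.3f}")
print(corr_matrix.round(3))
```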

Spearman vs Pearson

Pearson: Linear relationship only

- Measures: how close to a line?

- Sensitive to outliers

- Standard significance test assumes bivariate normality

Spearman: Monotonic relationship

- Measures: consistent ordering?

- More robust to outliers (uses ranks, not raw values)

- No distribution assumption

import numpy as np

from scipy.stats import pearsonr, spearmanr

# Perfect monotonic but non-linear

X = np.array([1, 2, 3, 4, 5])

Y = np.array([1, 4, 9, 16, 25]) # X squared

pearsonr(X, Y)[0] # ≈ 0.98 (not quite 1); [0] selects the coefficient, [1] the p-value

spearmanr(X, Y)[0] # 1.0 (perfect monotonic)
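The outlier point in the comparison can be illustrated the same way. The sketch below uses made-up data (an assumption for illustration): a perfect line, then the same line with one extreme value that still preserves the ordering.

```python
import numpy as np
from scipy.stats import pearsonr, spearmanr

# Illustrative data: a perfect line, then the same data with one extreme value
x = np.arange(1, 11, dtype=float)
y_clean = x.copy()
y_outlier = x.copy()
y_outlier[-1] = 1000.0  # extreme value that still preserves the ordering

for label, y in [("clean", y_clean), ("with outlier", y_outlier)]:
    r = pearsonr(x, y)[0]
    rho = spearmanr(x, y)[0]
    print(f"{label:>12}: Pearson={r:.3f}  Spearman={rho:.3f}")
```

Ranks ignore how far the last point sits from the rest, so Spearman is unchanged while Pearson drops well below 1. An outlier that changes the ordering would still move Spearman, though typically by less than it moves Pearson.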

When to use Spearman

- Ordinal data (ranks, ratings)

- Non-linear but monotonic relationships

- Presence of outliers

- Distribution unknown or non-normal

- Ranking evaluation (ML models)

In ML evaluation

Spearman is useful for evaluating ranking quality:

# Compare predicted rankings to true rankings

from scipy.stats import spearmanr

predicted_scores = model.predict(X_test) # score the same rows that y_test labels

true_relevance = y_test

correlation, _ = spearmanr(predicted_scores, true_relevance)

If your model puts items in the right order, Spearman will be high even when the predicted scores themselves are off.
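To see this with numbers, the sketch below uses hypothetical relevance labels and hypothetical model scores (neither comes from a real model). The scores are on the wrong scale but keep the correct order.

```python
import numpy as np
from scipy.stats import spearmanr

# Hypothetical ground-truth relevance and model scores (not from a real model)
true_relevance = np.array([3.0, 1.0, 4.0, 2.0, 5.0])
# Scores are on a very different scale but preserve the ordering
predicted_scores = np.array([0.30, 0.05, 0.35, 0.10, 0.99])

rho = spearmanr(predicted_scores, true_relevance)[0]
mse = np.mean((predicted_scores - true_relevance) ** 2)

print(f"Spearman: {rho:.2f}")  # ordering matches the ground truth
print(f"MSE:      {mse:.2f}")  # raw values are far from the targets
```

A value-based metric such as mean squared error penalizes the scale mismatch, while Spearman only sees that the ordering is identical.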

Handling ties

When values are equal, average their ranks:

Values: [10, 20, 20, 30]

Ranks: [1, 2.5, 2.5, 4]

scipy handles this automatically: spearmanr assigns average ranks to tied values, which is also the default in rankdata.

from scipy.stats import rankdata

ranks = rankdata([10, 20, 20, 30]) # [1, 2.5, 2.5, 4]
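Ties also affect which formula is safe to use. The sketch below pairs the tied values above with an illustrative second variable (an assumption for this example) and compares the $d_i^2$ shortcut formula with Pearson on the average ranks, which is what `spearmanr` computes.

```python
import numpy as np
from scipy.stats import pearsonr, rankdata, spearmanr

x = np.array([10, 20, 20, 30])  # contains a tie
y = np.array([1, 2, 3, 4])      # illustrative second variable (assumed)

rx = rankdata(x)  # default method="average" gives tied values their mean rank
ry = rankdata(y)

# Shortcut formula, which assumes there are no ties
n = len(x)
rho_shortcut = 1 - 6 * np.sum((rx - ry) ** 2) / (n * (n**2 - 1))

# Pearson on the ranks, which stays valid with ties
rho_pearson_on_ranks = pearsonr(rx, ry)[0]
rho_scipy = spearmanr(x, y)[0]

print(rho_shortcut, rho_pearson_on_ranks, rho_scipy)
```

The shortcut gives a slightly different number from the other two, which is why Pearson on the ranks is the safer definition once ties appear.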

Significance testing

Is the correlation significantly different from 0?

correlation, p_value = spearmanr(X, Y)

if p_value < 0.05:
    print(f"Significant correlation: {correlation:.2f}")

A small p-value means a correlation at least this strong would be unlikely to appear by chance if the true rank correlation were zero.
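One way to make that interpretation concrete is a permutation test: shuffle Y to destroy any real association, recompute Spearman many times, and count how often chance alone produces a value as extreme as the observed one. This is a sketch on synthetic data (assumed for illustration), not the method `spearmanr` uses internally for its p-value.

```python
import numpy as np
from scipy.stats import spearmanr

rng = np.random.default_rng(0)

# Synthetic data (assumed for illustration): a noisy increasing trend
x = np.arange(30, dtype=float)
y = x + rng.normal(0.0, 5.0, size=30)

rho_obs = spearmanr(x, y)[0]

# Shuffling y breaks any real association, simulating "no correlation"
n_perm = 2000
rho_null = np.array([
    spearmanr(x, rng.permutation(y))[0] for _ in range(n_perm)
])

# Two-sided p-value: share of shuffles at least as extreme as the observed value
p_perm = (np.sum(np.abs(rho_null) >= abs(rho_obs)) + 1) / (n_perm + 1)

print(f"observed rho = {rho_obs:.3f}, permutation p-value = {p_perm:.4f}")
```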

Confidence intervals

Bootstrap for confidence intervals:

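from scipy.stats import bootstrap, spearmanr

def spearman_stat(x, y):
    # Called on one paired resample at a time (vectorized=False below)
    return spearmanr(x, y)[0]

result = bootstrap(
    (X, Y),
    spearman_stat,
    n_resamples=1000,
    paired=True, # resample (x, y) pairs together
    vectorized=False, # the statistic does not accept an axis argument
)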

ci = result.confidence_interval
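To show what paired resampling does under the hood, here is a hand-rolled sketch on synthetic data (assumed for illustration). It uses the simple percentile method, whereas `scipy.stats.bootstrap` defaults to the BCa method, so the two intervals will generally differ slightly.

```python
import numpy as np
from scipy.stats import spearmanr

rng = np.random.default_rng(42)

# Synthetic paired data (assumed for illustration)
x = rng.normal(size=50)
y = x + rng.normal(scale=0.8, size=50)

n = len(x)
n_resamples = 1000
boot_rhos = np.empty(n_resamples)

for i in range(n_resamples):
    # Resample row indices with replacement so each (x, y) pair stays together
    idx = rng.integers(0, n, size=n)
    boot_rhos[i] = spearmanr(x[idx], y[idx])[0]

# Percentile interval: middle 95% of the bootstrap distribution
ci_low, ci_high = np.percentile(boot_rhos, [2.5, 97.5])
print(f"95% CI for rho: [{ci_low:.2f}, {ci_high:.2f}]")
```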

Correlation matrix

import seaborn as sns

# Spearman correlation matrix

corr_matrix = df.corr(method='spearman')

# Visualize

sns.heatmap(corr_matrix, annot=True, cmap='coolwarm')
