Learn the value of actions without knowing environment dynamics. Q-learning: simple, powerful, the foundation of DQN and modern deep RL.

The Q-function

Q(s, a) = expected return starting from state s, taking action a, then acting optimally.

If we know Q*, we know optimal policy: $$\pi^(s) = \arg\max_a Q^(s, a)$$

Just pick the action with highest Q-value.

The update rule

$$Q(s, a) \leftarrow Q(s, a) + \alpha \left[ r + \gamma \max_{a’} Q(s’, a’) - Q(s, a) \right]$$

Move Q toward observed return.

$r + \gamma \max_{a’} Q(s’, a’)$ is the target: immediate reward plus best future.

$\alpha$ is learning rate.

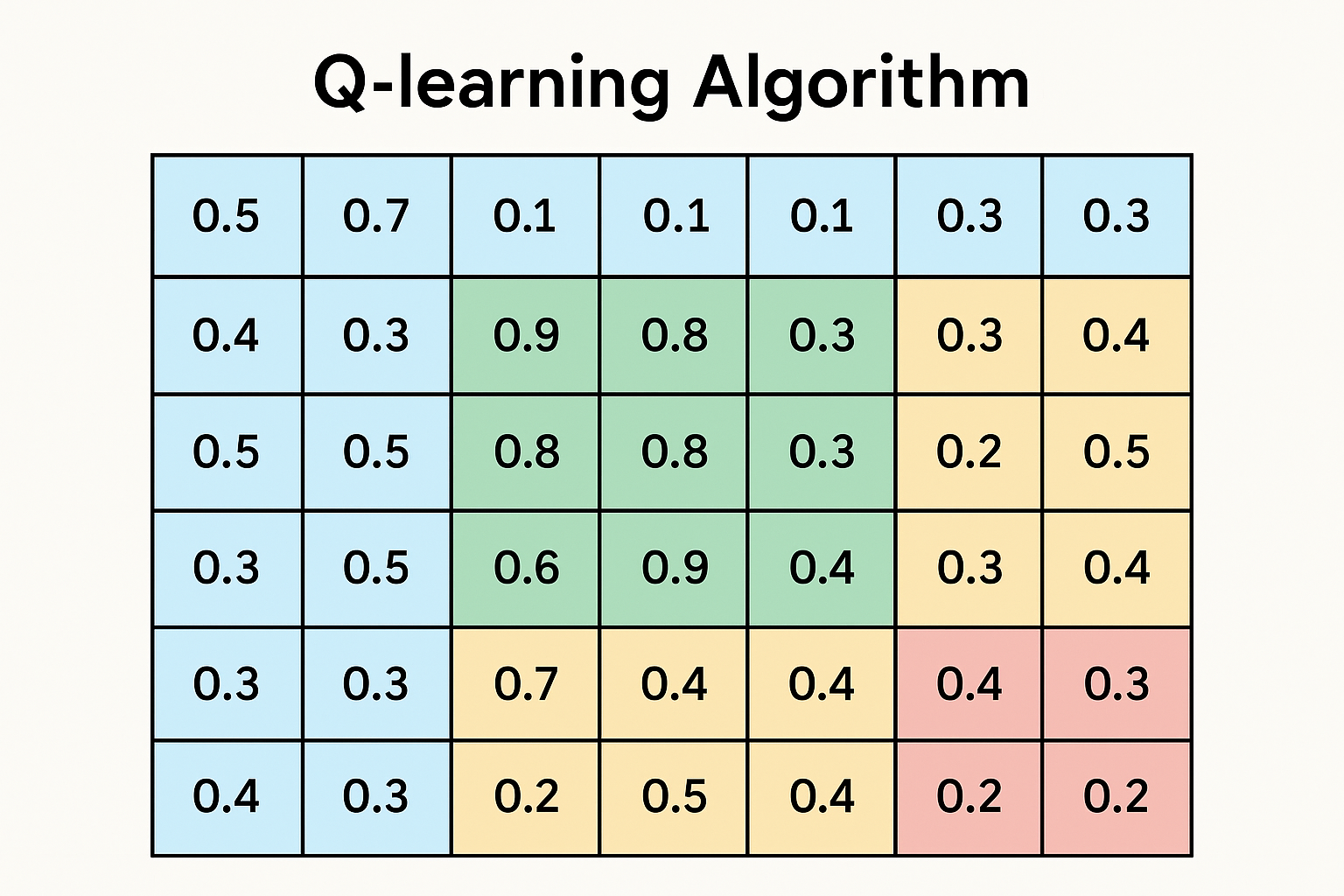

Interactive demo: Q-Learning Animation

Why it works

This is temporal difference learning. Instead of waiting for episode end:

- Observe one transition

- Update Q using estimated future value

- Repeat

Off-policy: learns optimal Q regardless of what policy generated the data.

Algorithm

def q_learning(env, num_episodes, alpha=0.1, gamma=0.99, epsilon=0.1):

Q = defaultdict(lambda: np.zeros(env.action_space.n))

for episode in range(num_episodes):

state = env.reset()

done = False

while not done:

# Epsilon-greedy action selection

if np.random.random() < epsilon:

action = env.action_space.sample()

else:

action = np.argmax(Q[state])

next_state, reward, done, _ = env.step(action)

# Q-learning update

best_next = np.max(Q[next_state]) if not done else 0

td_target = reward + gamma * best_next

Q[state][action] += alpha * (td_target - Q[state][action])

state = next_state

return Q

Key components

Epsilon-greedy

Balance exploration and exploitation:

- With probability ε: random action (explore)

- With probability 1-ε: best action (exploit)

Common: start ε high, decay over time.

Learning rate

Too high: unstable, oscillates Too low: learns slowly

Often decayed: $\alpha_t = 1/(1 + t)$

Discount factor

γ close to 1: values long-term rewards γ close to 0: myopic, immediate rewards only

Example: Cliff Walking

S . . . . . . . . G

C C C C C C C C C C

S = start, G = goal, C = cliff (big negative reward)

Q-learning finds optimal path: hug the cliff for shortest route.

SARSA (on-policy) learns safer path: stay away from cliff.

Deep Q-Networks (DQN)

Q-table doesn’t scale. Use neural network:

$$Q(s, a; \theta) \approx Q^*(s, a)$$

Challenges:

- Correlated samples → experience replay

- Moving target → target network

class DQN(nn.Module):

def __init__(self, state_dim, action_dim):

super().__init__()

self.net = nn.Sequential(

nn.Linear(state_dim, 128),

nn.ReLU(),

nn.Linear(128, 128),

nn.ReLU(),

nn.Linear(128, action_dim)

)

def forward(self, state):

return self.net(state) # Q-values for all actions

Limitations

- Overestimates Q-values (max over noise)

- Struggles with large/continuous action spaces

- Sample inefficient

Fixes: Double DQN, Dueling DQN, Rainbow…

Q-learning makes sense now? Star ML Animations and share this with your reinforcement learning community!