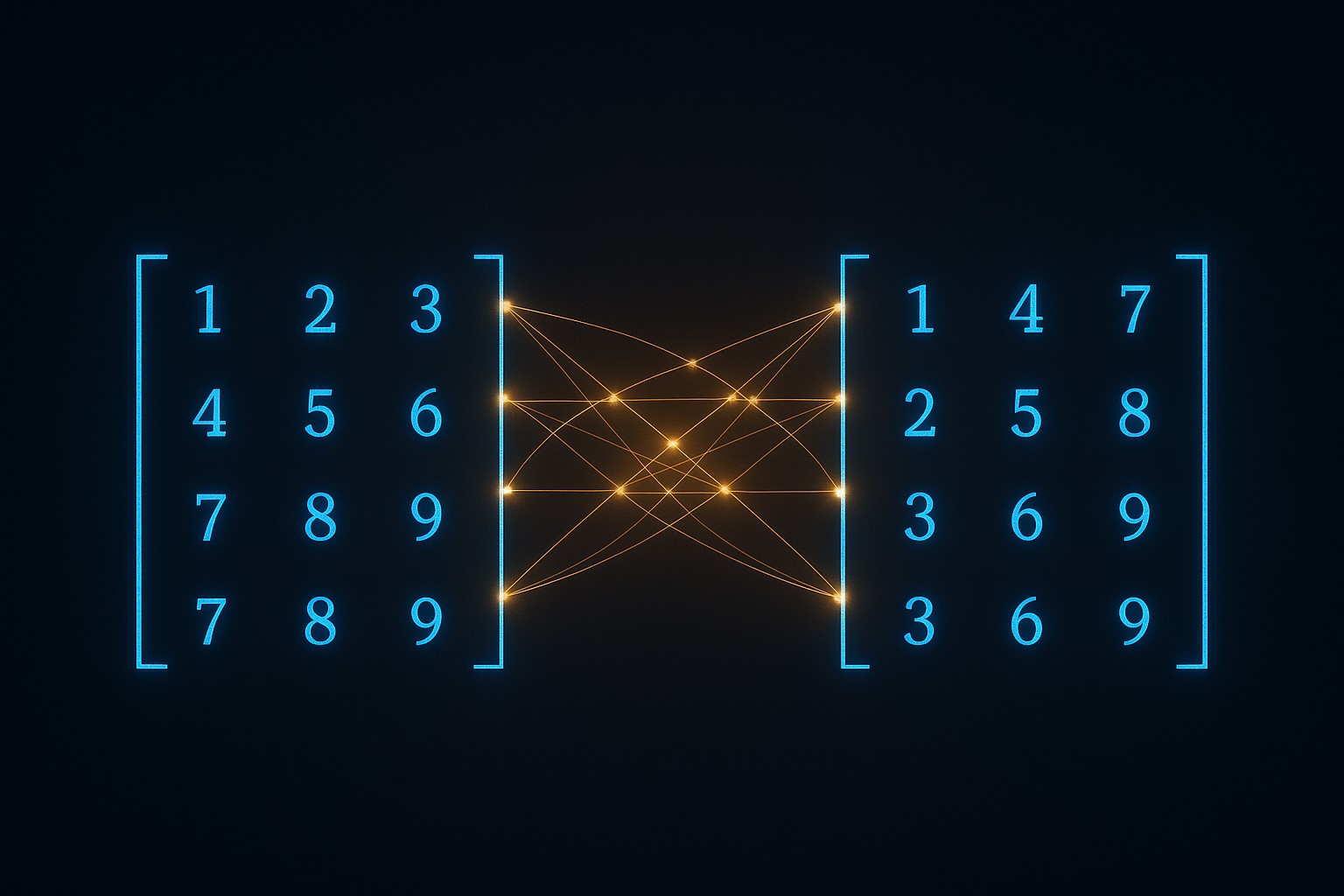

Most of the computation in a neural network is matrix multiplication. Understand this operation deeply and everything else becomes easier to follow.

The basic operation

Matrix A (m×n) times matrix B (n×p) gives matrix C (m×p).

Each element C[i,j] is the dot product of row i of A and column j of B.

$$C_{ij} = \sum_{k=1}^{n} A_{ik} \cdot B_{kj}$$
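A small worked example shows the formula in action. The matrices are arbitrary values chosen for illustration. Each entry of the product is one row-times-column dot product:

```python
import numpy as np

A = np.array([[1, 2, 3],
              [4, 5, 6]])    # 2×3
B = np.array([[7, 8],
              [9, 10],
              [11, 12]])     # 3×2

C = A @ B                    # 2×2 result

# C[0, 1] = row 0 of A · column 1 of B = 1*8 + 2*10 + 3*12 = 64
c01 = np.dot(A[0, :], B[:, 1])
print(c01)   # 64
print(C)     # [[ 58  64]
             #  [139 154]]
```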

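```python
import numpy as np

# Manual implementation
def matmul(A, B):
    m, n = A.shape
    n_b, p = B.shape
    if n != n_b:
        raise ValueError(f"inner dimensions must match: {n} != {n_b}")
    C = np.zeros((m, p))
    for i in range(m):          # each row of A
        for j in range(p):      # each column of B
            for k in range(n):  # dot product of row i and column j
                C[i, j] += A[i, k] * B[k, j]
    return C
```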

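Never use this in practice: it runs m·n·p multiply-adds in pure-Python loops (O(n³) for square n×n matrices), which is orders of magnitude slower than NumPy or PyTorch. Use A @ B instead, which dispatches to optimized BLAS or GPU kernels.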


Dimension rules

Inner dimensions must match: (m × n) × (n × p) = (m × p)

If they don’t match, multiplication is undefined.

```python
import numpy as np

A = np.random.randn(3, 4)  # 3×4
B = np.random.randn(4, 2)  # 4×2
C = A @ B                  # 3×2 ✓

B = np.random.randn(5, 2)  # 5×2
try:
    C = A @ B              # Error! 4 ≠ 5
except ValueError as err:
    print("ValueError:", err)
```
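The same rule chains through a sequence of products, which is how layer shapes in a network have to line up. The helper below, `matmul_chain_shape`, is a hypothetical function written for illustration: it applies the inner-dimension check left to right and reports the final shape.

```python
def matmul_chain_shape(*shapes):
    """Output shape of multiplying 2-D shapes left to right.

    Hypothetical helper for illustration: raises ValueError as soon as
    two adjacent inner dimensions differ.
    """
    rows, cols = shapes[0]
    for r, c in shapes[1:]:
        if cols != r:
            raise ValueError(f"inner dimensions differ: {cols} != {r}")
        cols = c  # inner dims cancel; the new column count carries forward
    return (rows, cols)

# A toy two-layer network: batch of 32 inputs, 100 -> 50 -> 10
print(matmul_chain_shape((32, 100), (100, 50), (50, 10)))  # (32, 10)
print(matmul_chain_shape((3, 4), (4, 2)))                  # (3, 2)
```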

In neural networks

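Linear layer: y = Wx + b for a single column vector x. With a batch stored as rows, as below, the same layer is written y = xW + b.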

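```python
import torch

# Batch of inputs: x is (batch_size, input_dim)
# Weights: W is (input_dim, output_dim); bias: b is (output_dim,)
# Output: y is (batch_size, output_dim)
x = torch.randn(32, 100)  # 32 samples, 100 features
W = torch.randn(100, 50)  # project to 50 dims
b = torch.randn(50)       # one bias per output dim, broadcast over the batch
y = x @ W + b             # 32×50 output
```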

Each sample (row of x) gets its own output row, computed with the same weights.
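PyTorch's torch.nn.Linear performs this same matmul, with one layout difference worth knowing: it stores its weight as (output_dim, input_dim) and computes x @ W.T + b. The sketch below reuses the sizes from the example above and checks that equivalence on random inputs; the seed and tolerance are arbitrary choices.

```python
import torch
import torch.nn as nn

torch.manual_seed(0)
layer = nn.Linear(100, 50)          # weight: (50, 100), bias: (50,)
x = torch.randn(32, 100)            # 32 samples, 100 features

y_layer = layer(x)                            # (32, 50)
y_manual = x @ layer.weight.T + layer.bias    # same computation, written out

print(layer.weight.shape)                            # torch.Size([50, 100])
print(torch.allclose(y_layer, y_manual, atol=1e-5))  # True
```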

Why associativity matters

$(AB)C = A(BC)$

Both groupings give the same result, but the evaluation order can change the cost enormously. Choose wisely.

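```python
# A is 1000×10, B is 10×1000, C is 1000×1
# (AB)C: (1000×10)(10×1000) = 1000×1000 intermediate, then (1000×1000)(1000×1)
#        cost: 1000·10·1000 + 1000·1000·1 = 11,000,000 multiply-adds (expensive!)
# A(BC): (10×1000)(1000×1) = 10×1 intermediate, then (1000×10)(10×1)
#        cost: 10·1000·1 + 1000·10·1 = 20,000 multiply-adds (cheap!)
```

Same result (up to floating-point rounding), roughly 550× less compute for A(BC).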
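The cost comparison can be checked in a few lines. matmul_cost is a hypothetical helper that counts one multiply-add per (i, j, k) triple of the formula; np.linalg.multi_dot is a real NumPy function that chooses the cheapest parenthesization for a chain of products on its own.

```python
import numpy as np

def matmul_cost(m, n, p):
    """Multiply-adds for an (m×n) @ (n×p) product (hypothetical helper)."""
    return m * n * p

# Shapes from the text: A is 1000×10, B is 10×1000, C is 1000×1
cost_AB_C = matmul_cost(1000, 10, 1000) + matmul_cost(1000, 1000, 1)
cost_A_BC = matmul_cost(10, 1000, 1) + matmul_cost(1000, 10, 1)
print(cost_AB_C, cost_A_BC)   # 11000000 20000

A = np.random.randn(1000, 10)
B = np.random.randn(10, 1000)
C = np.random.randn(1000, 1)
print(np.allclose((A @ B) @ C, A @ (B @ C)))   # True

# multi_dot picks the evaluation order for the whole chain
D = np.linalg.multi_dot([A, B, C])
print(np.allclose(D, A @ (B @ C)))             # True
```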

Transpose properties

$(AB)^T = B^T A^T$

Transposing a product reverses the order of the factors.

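```python
import numpy as np

A = np.random.randn(3, 4)
B = np.random.randn(4, 2)
np.allclose((A @ B).T, B.T @ A.T)  # True (equal up to floating-point rounding)
```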
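The reversal follows directly from the element-wise definition of the product. Taking $A$ as $m\times n$ and $B$ as $n\times p$, entry $(i, j)$ of $(AB)^T$ is:

$$\left((AB)^T\right)_{ij} = (AB)_{ji} = \sum_{k=1}^{n} A_{jk} B_{ki} = \sum_{k=1}^{n} (B^T)_{ik} (A^T)_{kj} = (B^T A^T)_{ij}$$

The shapes agree as well: $(AB)^T$ is $p\times m$, and $B^T$ ($p\times n$) times $A^T$ ($n\times m$) is $p\times m$, whereas $A^T B^T$ would only be defined when $m = p$.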

Batched matrix multiplication

Modern ML uses batched operations:

```python
import torch

# A is (batch, m, n)
# B is (batch, n, p)
# Result is (batch, m, p)
A = torch.randn(64, 10, 20)
B = torch.randn(64, 20, 30)
C = torch.bmm(A, B)  # batch matrix multiply
# C is (64, 10, 30)
```

Each batch element is multiplied independently: C[b] = A[b] @ B[b] for every b.
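To make "independently" concrete, the sketch below compares torch.bmm with an explicit Python loop of ordinary matmuls, and with the @ operator (torch.matmul), which also treats leading dimensions as batch dimensions. One difference to keep in mind: torch.bmm requires exactly 3-D inputs, while torch.matmul also broadcasts batch dimensions.

```python
import torch

A = torch.randn(64, 10, 20)
B = torch.randn(64, 20, 30)

C = torch.bmm(A, B)                                      # (64, 10, 30)

# Same thing, one ordinary 2-D matmul per batch element
C_loop = torch.stack([A[b] @ B[b] for b in range(A.shape[0])])

# The @ operator handles the batch dimension too
C_at = A @ B

print(C.shape)                               # torch.Size([64, 10, 30])
print(torch.allclose(C, C_loop, atol=1e-5))  # True
print(torch.allclose(C, C_at, atol=1e-5))    # True
```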

Einsum - flexible notation

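Einstein summation notation handles all of these cases, and more complex ones, with a single string: each input gets one index label per dimension, and any index that does not appear after -> is summed over.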

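```python
import torch

A, B = torch.randn(3, 4), torch.randn(4, 5)
Ab, Bb = torch.randn(64, 10, 20), torch.randn(64, 20, 30)
a, b = torch.randn(4), torch.randn(4)

# Regular matmul: (3, 4) x (4, 5) -> (3, 5)
C = torch.einsum('ij,jk->ik', A, B)

# Batched matmul: (64, 10, 20) x (64, 20, 30) -> (64, 10, 30)
C = torch.einsum('bij,bjk->bik', Ab, Bb)

# Dot product: i is summed away entirely, leaving a scalar
c = torch.einsum('i,i->', a, b)

# Outer product: nothing is summed
C = torch.einsum('i,j->ij', a, b)
```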
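A quick way to build confidence in an einsum string is to check it against the dedicated PyTorch call it should match. The sketch below does that for each case above, plus a transpose ('ij->ji') where no index is summed and the output just reorders the dimensions. Shapes are arbitrary.

```python
import torch

A, B = torch.randn(3, 4), torch.randn(4, 5)
Ab, Bb = torch.randn(8, 3, 4), torch.randn(8, 4, 5)
a, b = torch.randn(4), torch.randn(4)

# (einsum result, equivalent dedicated operation)
checks = {
    "matmul":    (torch.einsum('ij,jk->ik', A, B), A @ B),
    "batched":   (torch.einsum('bij,bjk->bik', Ab, Bb), torch.bmm(Ab, Bb)),
    "dot":       (torch.einsum('i,i->', a, b), torch.dot(a, b)),
    "outer":     (torch.einsum('i,j->ij', a, b), torch.outer(a, b)),
    "transpose": (torch.einsum('ij->ji', A), A.T),
}
for name, (via_einsum, reference) in checks.items():
    print(name, torch.allclose(via_einsum, reference, atol=1e-6))  # each prints True
```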

Attention is matmul

The core operation of self-attention (scaled dot-product attention):

$$\text{Attention}(Q, K, V) = \text{softmax}\left(\frac{QK^T}{\sqrt{d_k}}\right)V$$

It takes two matrix multiplications: Q @ K.T to score every query against every key, then the softmax-normalized scores @ V.

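```python
import math
import torch
import torch.nn.functional as F

Q, K, V = torch.randn(3, 2, 5, 16)  # toy Q, K, V, each (batch, seq_len, d_k)
d_k = Q.size(-1)

scores = (Q @ K.transpose(-2, -1)) / math.sqrt(d_k)  # matmul
attn = F.softmax(scores, dim=-1)
output = attn @ V  # matmul
```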
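Tracking the shapes makes the two matmuls easier to see: the first produces one score per query-key pair, the second mixes value rows using those scores. The sketch below uses toy sizes (batch 2, sequence length 5, d_k 16) and compares the hand-written version with torch.nn.functional.scaled_dot_product_attention, a fused implementation available in PyTorch 2.0 and later whose default scale is 1/sqrt(d_k).

```python
import math
import torch
import torch.nn.functional as F

torch.manual_seed(0)
batch, seq_len, d_k = 2, 5, 16
Q = torch.randn(batch, seq_len, d_k)
K = torch.randn(batch, seq_len, d_k)
V = torch.randn(batch, seq_len, d_k)

scores = (Q @ K.transpose(-2, -1)) / math.sqrt(d_k)  # (2, 5, 5): query-key scores
attn = F.softmax(scores, dim=-1)                     # each row sums to 1
output = attn @ V                                    # (2, 5, 16): weighted sums of value rows

print(scores.shape, output.shape)  # torch.Size([2, 5, 5]) torch.Size([2, 5, 16])
print(torch.allclose(attn.sum(dim=-1), torch.ones(batch, seq_len)))  # True

# Fused version of the same formula (PyTorch 2.0+)
fused = F.scaled_dot_product_attention(Q, K, V)
print(torch.allclose(output, fused, atol=1e-5))
```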

GPU efficiency

GPUs are optimized for matrix multiplication. A few things help you get full speed out of them:

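- Memory layout: keep matrices contiguous in memory, so kernels read them without strided access or hidden copies

- Batching: one large batched multiplication exposes far more parallelism than many small ones

- Dimension sizes: sizes that are multiples of 8 (or of larger powers of 2) often run faster, because they map cleanly onto GPU tiles and Tensor Cores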
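The contiguity point is easy to inspect in PyTorch: transposing a 2-D tensor returns a view with swapped strides rather than moving any data, and .contiguous() makes a row-major copy. Whether a particular kernel is slower on the non-contiguous view depends on the operation and backend, so treat this as a way to check layout, not as a benchmark.

```python
import torch

x = torch.randn(256, 512)
xt = x.T                      # a view with swapped strides; no data is copied

print(x.is_contiguous(), xt.is_contiguous())  # True False
print(x.stride(), xt.stride())                # (512, 1) (1, 512)

xt_c = xt.contiguous()        # copies the data into row-major order
print(xt_c.is_contiguous(), xt_c.stride())    # True (256, 1)
```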
