“The future depends only on the present, not on how we got here.”

This is the Markov property. Surprisingly powerful for modeling sequences, from weather to text to molecules.

Definition

A sequence of states where:

$$P(X_{t+1} | X_t, X_{t-1}, …, X_0) = P(X_{t+1} | X_t)$$

History doesn’t matter. Only current state matters.

Transition matrix

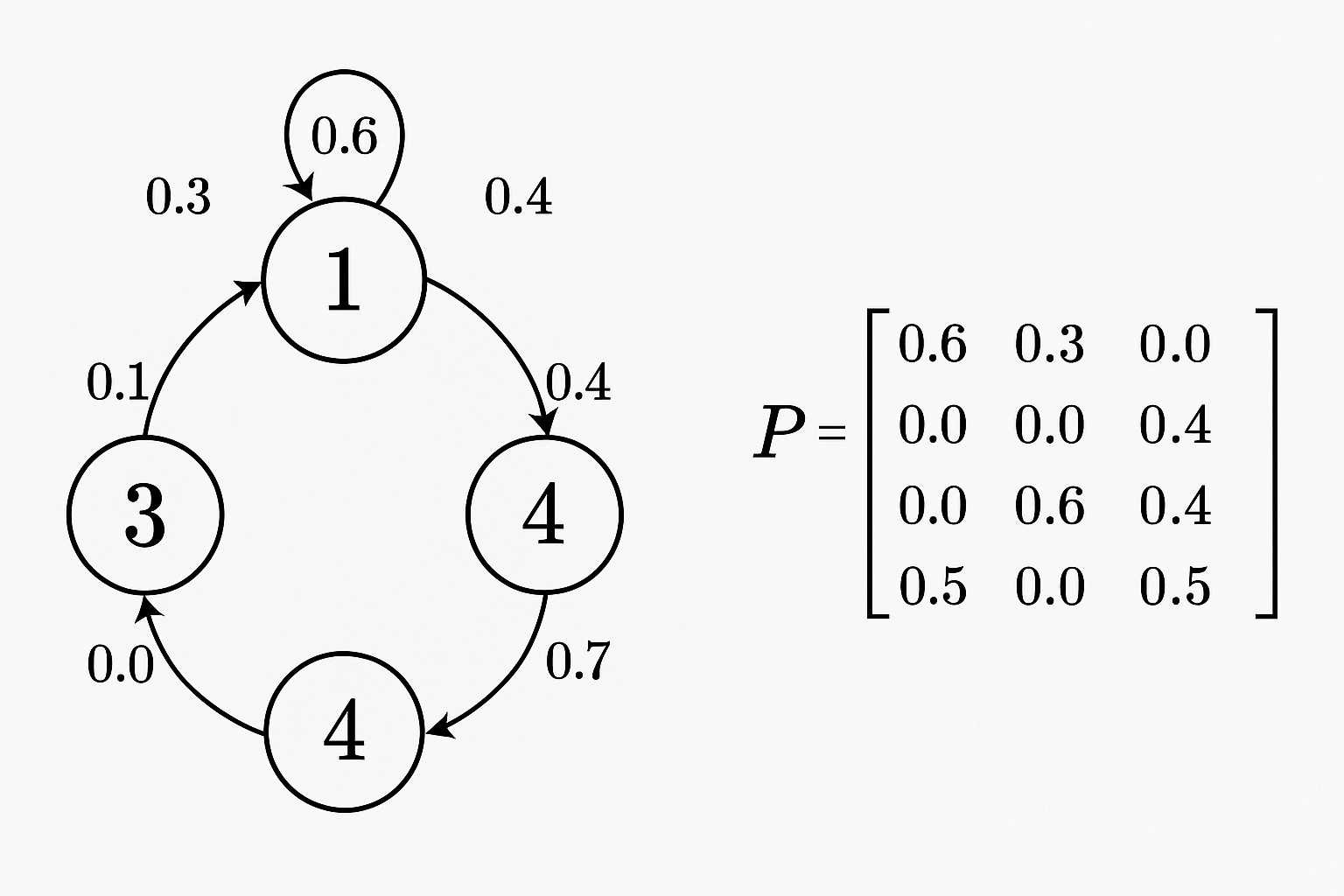

Define P[i,j] = probability of going from state i to state j.

Example (weather):

Sunny Rainy

Sunny 0.8 0.2

Rainy 0.4 0.6

If sunny today, 80% chance sunny tomorrow.

Interactive demo: Markov Chain Animation

Computing future states

If $\pi_t$ is distribution over states at time t:

$$\pi_{t+1} = \pi_t \cdot P$$

After n steps: $$\pi_n = \pi_0 \cdot P^n$$

import numpy as np

P = np.array([[0.8, 0.2],

[0.4, 0.6]])

# Start sunny

pi = np.array([1, 0])

# After 5 steps

for _ in range(5):

pi = pi @ P

print(pi) # [0.667, 0.333]

Stationary distribution

As n → ∞, $\pi_n$ converges to stationary distribution $\pi^*$:

$$\pi^* = \pi^* \cdot P$$

The distribution that doesn’t change after transitions.

For our weather example: [0.667, 0.333] Long-run: 2/3 sunny, 1/3 rainy (regardless of starting state).

Finding it: solve $\pi P = \pi$ with $\sum_i \pi_i = 1$

Applications

Text generation

States = words. Transitions = what word follows what.

# Build from corpus

transitions = defaultdict(Counter)

for i in range(len(words) - 1):

transitions[words[i]][words[i+1]] += 1

# Generate text

def generate(start, length):

current = start

result = [current]

for _ in range(length):

next_words = transitions[current]

current = random.choices(

list(next_words.keys()),

list(next_words.values())

)[0]

result.append(current)

return ' '.join(result)

Simple but captures some structure. Early language models.

MCMC (Markov Chain Monte Carlo)

Sample from complex distributions by constructing Markov chain whose stationary distribution is your target.

Metropolis-Hastings, Gibbs sampling - fundamental in Bayesian inference.

Hidden Markov Models

States are hidden. We only see emissions from states.

Used for:

- Speech recognition (states = phonemes, emissions = audio)

- Part-of-speech tagging (states = POS tags, emissions = words)

- Biological sequences

Limitations

First-order Markov: only one step of history. Often too restrictive.

Fixes:

- Higher-order Markov (last n states)

- Hidden Markov Models (hidden states capture history)

- Replace with RNN/Transformer (learned representations)

Markov chains visualized! If this helped, star ML Animations and share with your probability-loving friends!