Word2Vec uses local context windows. GloVe takes a different approach: it uses global word co-occurrence statistics. It came out of Stanford in 2014.

Word2Vec vs GloVe

- Word2Vec: trains on (center, context) word pairs and processes the corpus as a stream

- GloVe: first builds a co-occurrence matrix, then factorizes it

The philosophies differ, but the results are similar: sometimes GloVe works better, sometimes Word2Vec does.
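To make the contrast concrete, here is a minimal sketch of the two views of the same toy corpus. The helper names `skipgram_pairs` and `cooccurrence_counts` are made up for illustration, and the window size of 1 is an arbitrary choice.

```python
from collections import Counter

def skipgram_pairs(sentence, window=1):
    """Yield (center, context) pairs one at a time, Word2Vec style."""
    for i, center in enumerate(sentence):
        lo = max(0, i - window)
        hi = min(len(sentence), i + window + 1)
        for j in range(lo, hi):
            if j != i:
                yield center, sentence[j]

def cooccurrence_counts(corpus, window=1):
    """Aggregate the same pairs into global counts, GloVe style."""
    counts = Counter()
    for sentence in corpus:
        counts.update(skipgram_pairs(sentence, window))
    return counts

corpus = [["the", "cat", "sat"], ["the", "cat", "ran"]]

# Stream view: one training example per pair, in corpus order.
pairs = [p for s in corpus for p in skipgram_pairs(s)]

# Global view: each distinct pair appears once, with its total count.
counts = cooccurrence_counts(corpus)
```

The stream view never needs the whole corpus in memory. The global view needs one full pass before training can start, but after that each distinct pair is visited once per epoch regardless of how often it occurred.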

Interactive demo: GloVe Animation

The co-occurrence matrix

Count how often words appear together in a window.

|     | cat | dog | sat | mat |
|-----|-----|-----|-----|-----|
| cat | -   | 5   | 3   | 2   |
| dog | 5   | -   | 1   | 1   |
| sat | 3   | 1   | -   | 4   |
| mat | 2   | 1   | 4   | -   |

X[i,j] = how many times word i appears near word j

In dense form this matrix is huge: a vocabulary of 400K words gives 400K × 400K = 160 billion entries. But it's very sparse, so only the nonzero counts need to be stored.
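The size estimate is easy to check, and it shows why sparse storage matters. In this sketch the 4 bytes per entry and the "1 pair in 100 is nonzero" fill rate are assumptions picked for illustration, not measured figures.

```python
vocab_size = 400_000
dense_entries = vocab_size ** 2            # 160 billion cells

bytes_per_entry = 4                        # assumption: float32 counts
dense_gb = dense_entries * bytes_per_entry / 1e9

# Assumption for illustration: 1 in 100 pairs ever co-occurs.
nonzero_entries = dense_entries // 100

# A sparse store keeps only nonzero pairs, e.g. a dict keyed by (i, j).
sparse_counts = {(0, 1): 5.0, (0, 2): 3.0, (1, 0): 5.0}
missing = sparse_counts.get((2, 3), 0.0)   # absent pairs read as zero
```

At 4 bytes per cell the dense matrix would need 640 GB, which is why implementations store only the pairs that actually occur.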

The objective

GloVe’s insight: word vectors should encode the ratio of co-occurrence probabilities.
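A small worked example of what "ratio of co-occurrence probabilities" means, using the toy counts from the table above. The diagonal is assumed to be 0, and `prob_ratio` is a hypothetical helper name.

```python
import numpy as np

words = ["cat", "dog", "sat", "mat"]

# Toy counts from the table above; diagonal entries are assumed to be 0.
X = np.array([
    [0, 5, 3, 2],
    [5, 0, 1, 1],
    [3, 1, 0, 4],
    [2, 1, 4, 0],
], dtype=float)

# P[i, k] = P(k | i): probability that word k shows up in the context of word i.
P = X / X.sum(axis=1, keepdims=True)

def prob_ratio(i_word, j_word, k_word):
    """Return P(k | i) / P(k | j) for a probe word k."""
    i, j, k = (words.index(w) for w in (i_word, j_word, k_word))
    return P[i, k] / P[j, k]

ratio_sat = prob_ratio("cat", "dog", "sat")   # (3/10) / (1/7)
ratio_mat = prob_ratio("cat", "dog", "mat")   # (2/10) / (1/7)
```

In these toy counts "sat" is about 2.1 times more likely near "cat" than near "dog". A ratio far from 1 says the probe word tells the two words apart; a ratio near 1 says it doesn't.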

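For words i and j: $$w_i^T \tilde{w}_j + b_i + \tilde{b}_j = \log X_{ij}$$

Here $w_i$ is the word vector for i, $\tilde{w}_j$ is a separate context vector for j, and $b_i$, $\tilde{b}_j$ are bias terms.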

The loss function:

$$J = \sum_{i,j=1}^{V} f(X_{ij})\left(w_i^T \tilde{w}_j + b_i + \tilde{b}_j - \log X_{ij}\right)^2$$

Where f(x) is a weighting function that:

- Caps the weight of very frequent pairs (they dominate the sum otherwise)

- Gives zero weight when X[i,j] = 0, so the undefined log 0 term never contributes
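Here is a minimal sketch of the weighting function and the loss on the toy matrix. The form of f and its defaults (x_max = 100, alpha = 0.75) follow the GloVe paper. The rest is an assumption for illustration: `train` is a hypothetical helper, and plain full-batch gradient descent stands in for the AdaGrad updates the original implementation uses.

```python
import numpy as np

def weight(x, x_max=100.0, alpha=0.75):
    """f(x) = (x / x_max)^alpha below x_max, 1 at or above it; f(0) = 0."""
    return np.where(x < x_max, (x / x_max) ** alpha, 1.0)

def train(X, dim=2, lr=0.05, epochs=200, seed=0):
    """Fit vectors and biases to log X; returns parameters and loss history."""
    rng = np.random.default_rng(seed)
    V = X.shape[0]
    W = rng.normal(scale=0.1, size=(V, dim))       # word vectors w_i
    W_ctx = rng.normal(scale=0.1, size=(V, dim))   # context vectors w~_j
    b = np.zeros(V)
    b_ctx = np.zeros(V)

    mask = X > 0
    log_X = np.log(np.where(mask, X, 1.0))         # placeholder 1.0 avoids log(0)
    F = weight(X) * mask                           # zero weight for zero counts

    losses = []
    for _ in range(epochs):
        err = W @ W_ctx.T + b[:, None] + b_ctx[None, :] - log_X
        losses.append(float(np.sum(F * err ** 2)))
        G = 2.0 * F * err                          # dJ / d(prediction_ij)
        grad_W, grad_W_ctx = G @ W_ctx, G.T @ W    # both use pre-update values
        W -= lr * grad_W
        W_ctx -= lr * grad_W_ctx
        b -= lr * G.sum(axis=1)
        b_ctx -= lr * G.sum(axis=0)
    return W, W_ctx, b, b_ctx, losses

# Toy counts from the co-occurrence table, diagonal assumed 0.
X = np.array([[0, 5, 3, 2],
              [5, 0, 1, 1],
              [3, 1, 0, 4],
              [2, 1, 4, 0]], dtype=float)

W, W_ctx, b, b_ctx, losses = train(X)
```

Comparing `losses[0]` with `losses[-1]` shows the fit improving. On real data the sum runs only over nonzero entries, visited in shuffled order, rather than over a dense matrix.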

Building co-occurrence matrix

    import numpy as np
    from collections import defaultdict

    def build_cooccurrence(corpus, vocab, window=5):
        """Count distance-weighted co-occurrences over tokenized sentences."""
        cooccurrence = defaultdict(float)  # sparse: only nonzero pairs are stored
        for sentence in corpus:
            for i, center in enumerate(sentence):
                if center not in vocab:
                    continue  # skip out-of-vocabulary center words
                for j in range(max(0, i - window), min(len(sentence), i + window + 1)):
                    context = sentence[j]
                    if i == j or context not in vocab:
                        continue
                    # weight by distance (closer = more weight)
                    cooccurrence[(center, context)] += 1.0 / abs(i - j)
        return cooccurrence
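For training, the pair-keyed dictionary is usually converted to integer indices. A minimal sketch, using a small hand-written dictionary in the same (center, context) -> weight format; the values are made up and the variable names are illustrative.

```python
import numpy as np

# Hand-written stand-in for the output of a co-occurrence pass.
cooccurrence = {
    ("cat", "sat"): 1.5,
    ("sat", "cat"): 1.5,
    ("sat", "mat"): 1.0,
    ("mat", "sat"): 1.0,
}

vocab = sorted({w for pair in cooccurrence for w in pair})
word_to_id = {w: i for i, w in enumerate(vocab)}

# Parallel arrays of nonzero entries: a simple coordinate (COO) layout.
rows = np.array([word_to_id[c] for c, _ in cooccurrence])
cols = np.array([word_to_id[k] for _, k in cooccurrence])
vals = np.array(list(cooccurrence.values()), dtype=np.float32)

# Dense view, only sensible for a toy vocabulary.
X = np.zeros((len(vocab), len(vocab)), dtype=np.float32)
X[rows, cols] = vals
```

The three parallel arrays are all a training loop needs. The dense `X` is there only to make the toy result easy to inspect.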

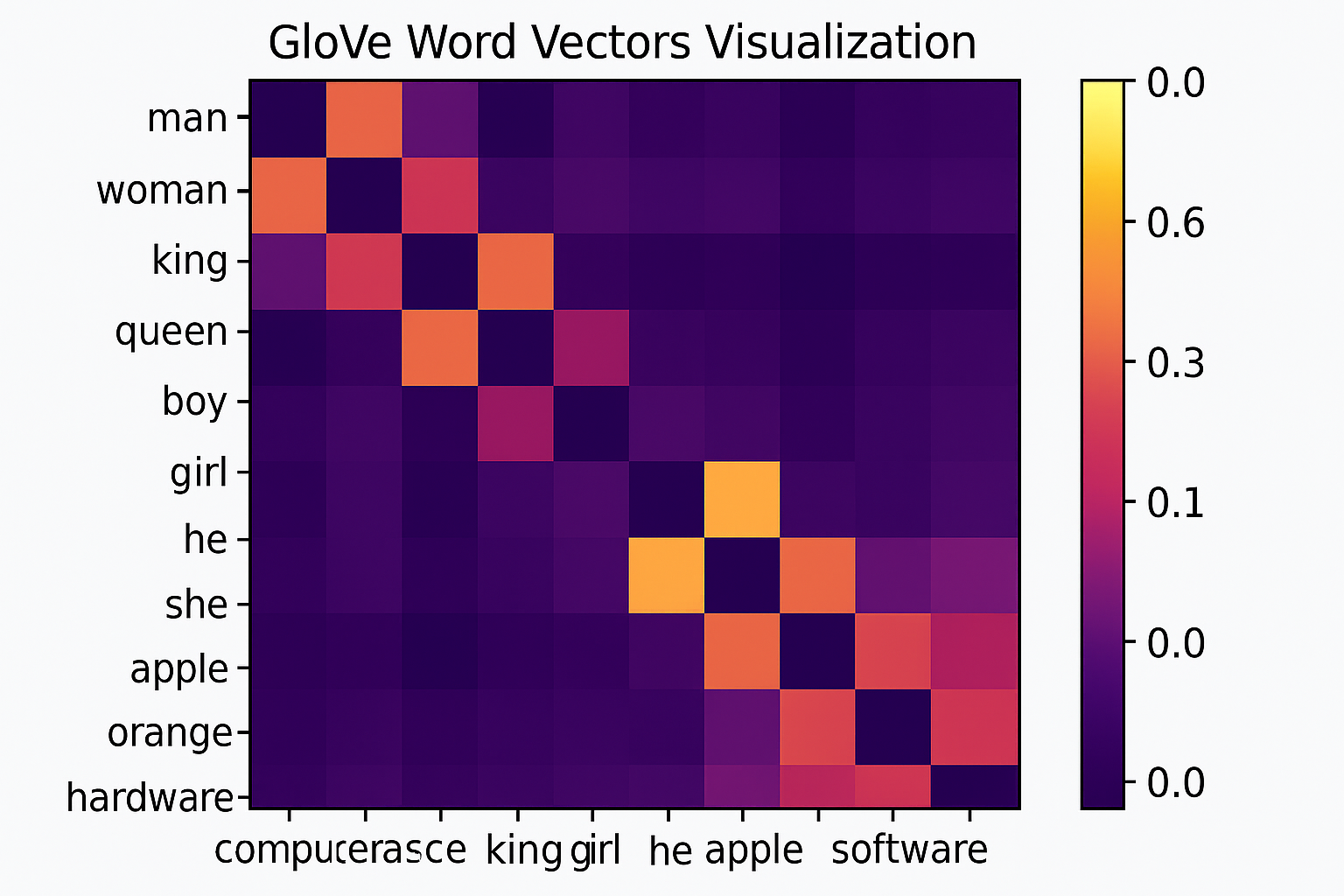

Pretrained vectors

Stanford provides pretrained GloVe vectors:

- Wikipedia + Gigaword: 6B tokens

- Common Crawl: 42B and 840B tokens

- Twitter: 27B tokens (captures informal language)

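Dimensions: 50, 100, 200, 300 for the Wikipedia + Gigaword set. The other sets differ: Common Crawl comes in 300 only, Twitter in 25, 50, 100, 200.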

    # loading pretrained
    def load_glove(path):
        embeddings = {}
        with open(path, encoding='utf-8') as f:
            for line in f:
                values = line.split()
                word = values[0]
                vector = np.array(values[1:], dtype='float32')
                embeddings[word] = vector
        return embeddings
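Once the vectors are in a dictionary, the usual first check is a nearest-neighbor lookup by cosine similarity. A minimal sketch: `cosine` and `most_similar` are hypothetical helpers, and the 3-d vectors are made-up stand-ins for real GloVe rows.

```python
import numpy as np

def cosine(u, v):
    """Cosine similarity between two 1-d vectors."""
    return float(np.dot(u, v) / (np.linalg.norm(u) * np.linalg.norm(v)))

def most_similar(word, embeddings, topn=3):
    """Rank all other words by cosine similarity to `word`."""
    query = embeddings[word]
    scores = [(other, cosine(query, vec))
              for other, vec in embeddings.items() if other != word]
    return sorted(scores, key=lambda pair: pair[1], reverse=True)[:topn]

# Toy vectors with made-up values, in the same word -> array format.
embeddings = {
    "cat": np.array([0.9, 0.1, 0.0], dtype="float32"),
    "dog": np.array([0.8, 0.2, 0.0], dtype="float32"),
    "mat": np.array([0.0, 0.1, 0.9], dtype="float32"),
}

neighbors = most_similar("cat", embeddings, topn=2)
```

This linear scan is fine for a quick look. With a 400K-word vocabulary, stacking the vectors into one matrix and doing a single matrix-vector product is much faster.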

When to use GloVe vs Word2Vec

GloVe:

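- You have a fixed corpus

- You want reproducible statistics (the co-occurrence counts are fully determined by the corpus, though training itself still depends on random initialization)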

- Global statistics matter for your task

Word2Vec:

- Data arrives as a stream or can't fit in memory all at once

- Need incremental updates

- Smaller corpora (GloVe needs lots of data)
