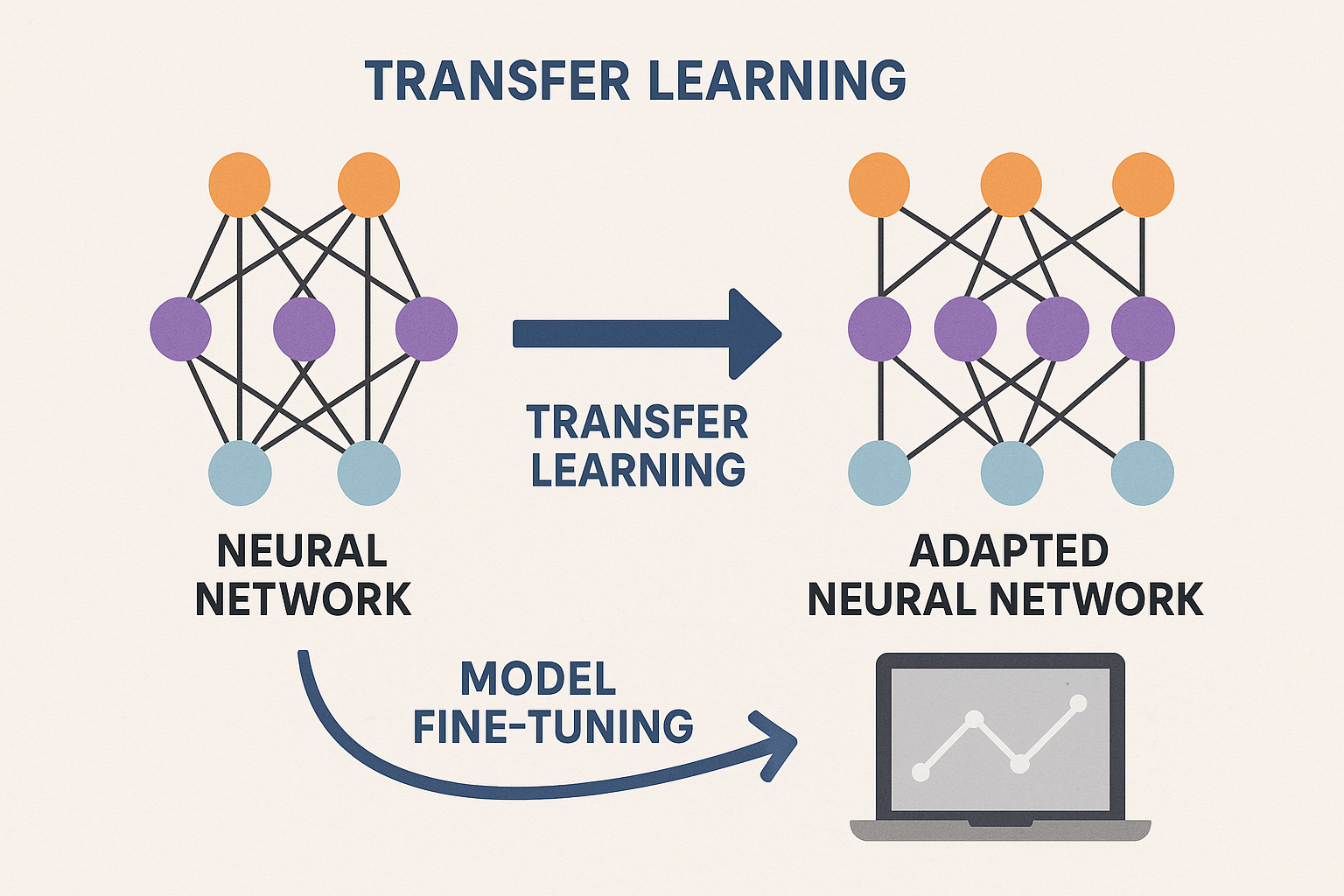

Pretrained LLMs are generalists. Fine-tuning makes them specialists. Adapt to your domain, your style, your task.

When prompting isn’t enough.

When to fine-tune

Fine-tune when:

- Specific output format needed consistently

- Domain-specific knowledge required

- Particular style or tone

- Fine-tuning measurably beats prompting for your use case

Don’t fine-tune when:

- Few examples available (try few-shot prompting)

- Task is simple (prompting works)

- Data is sensitive (training examples can be memorized and leak from the weights)


Full fine-tuning

Update all parameters on your data.

from transformers import Trainer, TrainingArguments

# Assumes `model` and `train_dataset` are already loaded and tokenized.
training_args = TrainingArguments(
    output_dir="./results",
    num_train_epochs=3,
    per_device_train_batch_size=4,
    learning_rate=2e-5,
    weight_decay=0.01,
)

trainer = Trainer(
    model=model,
    args=training_args,
    train_dataset=train_dataset,
)

trainer.train()

Pros: Maximum adaptation

Cons: Expensive, needs full model storage per task
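To put a rough number on "expensive", here is a back-of-the-envelope sketch of training memory. It assumes fp32 weights, fp32 gradients, and an Adam-style optimizer that keeps two fp32 moments per parameter (16 bytes per parameter in total), and it ignores activations and framework overhead. The function name and defaults are illustrative, and mixed-precision setups will give different figures.

```python
def full_finetune_memory_gb(num_params, weight_bytes=4, grad_bytes=4, optimizer_bytes=8):
    """Rough training memory in GB, ignoring activations and framework overhead."""
    bytes_per_param = weight_bytes + grad_bytes + optimizer_bytes
    return num_params * bytes_per_param / 1e9


# Assumed example: a model with 7 billion parameters.
estimate = full_finetune_memory_gb(7e9)
print(f"{estimate:.0f} GB")
```

Under these assumptions the estimate is well beyond a single consumer GPU, which is the motivation for the parameter-efficient methods that follow.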

LoRA (Low-Rank Adaptation)

Instead of updating all weights, add small trainable matrices.

$$W' = W + \Delta W = W + BA$$

Here B (d × r) and A (r × k) are low-rank factors of the d × k update, with r ≪ hidden_size. W stays frozen; only B and A are trained.

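from peft import LoraConfig, get_peft_model

config = LoraConfig(
    r=16,  # rank of the update matrices
    lora_alpha=32,  # scaling factor; the update is scaled by lora_alpha / r
    target_modules=["q_proj", "v_proj"],  # module names depend on the model architecture
    lora_dropout=0.05,
)

# Assumes `model` is an already-loaded base model.
model = get_peft_model(model, config)
model.print_trainable_parameters()  # typically around 1% of parameters or less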

Pros: Efficient, multiple adapters per base model

Cons: Slightly less expressive than full
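The low-rank update can be sketched in plain NumPy to show where the savings come from. This is an illustration under stated assumptions, not how the peft library is implemented internally: the layer width of 1024 is made up, and the zero initialization of B with an alpha / r scaling follows the usual LoRA recipe.

```python
import numpy as np

hidden_size = 1024  # assumed layer width, for illustration only
r = 16              # LoRA rank
alpha = 32          # LoRA scaling factor

rng = np.random.default_rng(0)
W = rng.normal(size=(hidden_size, hidden_size))     # frozen pretrained weight
A = rng.normal(scale=0.01, size=(r, hidden_size))   # trainable, small random init
B = np.zeros((hidden_size, r))                      # trainable, zero init so the update starts at 0

delta_W = (alpha / r) * (B @ A)  # low-rank update, same shape as W
W_adapted = W + delta_W

full_params = W.size
lora_params = A.size + B.size
print(f"trainable: {lora_params} of {full_params} parameters for this layer")
```

Because B starts at zero, the adapted layer is identical to the pretrained one before training begins. The trainable share shrinks further as hidden_size grows, since the adapter cost is linear in the width while the full matrix is quadratic.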

QLoRA

Quantized LoRA: the frozen base model is stored in 4-bit, while the adapters are trained in 16-bit precision (bf16 in the example below).

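import torch
from transformers import AutoModelForCausalLM, BitsAndBytesConfig

bnb_config = BitsAndBytesConfig(
    load_in_4bit=True,
    bnb_4bit_quant_type="nf4",  # 4-bit NormalFloat
    bnb_4bit_compute_dtype=torch.bfloat16,  # dtype used for matrix multiplications
)

model = AutoModelForCausalLM.from_pretrained(
    "llama-7b",  # placeholder; substitute the full model ID or a local path
    quantization_config=bnb_config,
)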

This makes it possible to fine-tune a 7B model on a single GPU.
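To give a feel for what 4-bit storage means, here is a simplified sketch of blockwise absmax quantization in NumPy. It is a stand-in, not the NF4 scheme itself: NF4 uses non-uniform levels, while this sketch uses uniform integer codes. The function names, block size, and random weights are assumptions for illustration, and the codes are kept in int8 here rather than packed two per byte.

```python
import numpy as np


def quantize_4bit(weights, block_size=64):
    """Blockwise absmax quantization to signed integer codes in [-7, 7]."""
    blocks = weights.reshape(-1, block_size)
    scales = np.abs(blocks).max(axis=1, keepdims=True) / 7.0
    scales[scales == 0] = 1.0  # avoid division by zero for all-zero blocks
    codes = np.clip(np.round(blocks / scales), -7, 7).astype(np.int8)
    return codes, scales


def dequantize_4bit(codes, scales, shape):
    """Recover approximate float weights from codes and per-block scales."""
    return (codes * scales).reshape(shape)


rng = np.random.default_rng(0)
W = rng.normal(scale=0.02, size=(256, 256)).astype(np.float32)  # made-up weights

codes, scales = quantize_4bit(W)
W_hat = dequantize_4bit(codes, scales, W.shape)
max_error = np.abs(W - W_hat).max()
print(f"max reconstruction error: {max_error:.5f}")
```

Each weight needs only 4 bits plus a small share of its block's scale, and the reconstruction error is bounded by half a quantization step. The adapters stay in higher precision, so training gradients never flow through the rounded codes.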

Dataset preparation

Format: instruction + response pairs (or conversations)

{"instruction": "Summarize:", "input": "Long article...", "output": "Summary..."}

{"instruction": "Translate to French:", "input": "Hello", "output": "Bonjour"}

Quality > quantity. 1000 high-quality examples are often enough.
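Records like the ones above are usually flattened into a prompt and a target completion before tokenization. The sketch below shows one way to do that. The "### Instruction / ### Input / ### Response" template and the `format_example` helper are assumptions for illustration; use whatever template your base model or training library expects.

```python
import json


def format_example(record):
    """Turn one instruction record into a (prompt, completion) pair."""
    prompt = f"### Instruction:\n{record['instruction']}\n\n"
    if record.get("input"):  # the input field is optional
        prompt += f"### Input:\n{record['input']}\n\n"
    prompt += "### Response:\n"
    return prompt, record["output"]


# Two JSONL lines, one record per line.
jsonl = '''{"instruction": "Summarize:", "input": "Long article...", "output": "Summary..."}
{"instruction": "Translate to French:", "input": "Hello", "output": "Bonjour"}'''

pairs = [format_example(json.loads(line)) for line in jsonl.splitlines() if line.strip()]

for prompt, completion in pairs:
    print(prompt + completion)
    print("---")
```

Keeping the prompt and completion separate makes it easy to compute the loss on the response tokens only, which is a common choice for instruction tuning.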

Training tips

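Learning rate: Much lower than pretraining. 1e-5 to 5e-5 is a common range for full fine-tuning; LoRA adapters usually tolerate higher rates, around 1e-4.

Epochs: Few (1-5). More epochs risk overfitting.

Batch size: As large as fits in memory. Gradient accumulation simulates a larger batch when memory is tight.

Warmup: Start with a low learning rate and ramp up over the first steps.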

Regularization: Weight decay, dropout
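Two of these tips reduce to simple arithmetic, sketched below: a linear warmup followed by linear decay, and the effective batch size under gradient accumulation. The function names, the 100-step warmup, and the decay shape are assumptions for illustration; training libraries offer several built-in schedules.

```python
def lr_at_step(step, total_steps, peak_lr=2e-5, warmup_steps=100):
    """Linear warmup to peak_lr, then linear decay to zero."""
    if step < warmup_steps:
        return peak_lr * (step + 1) / warmup_steps
    remaining = max(total_steps - step, 0)
    return peak_lr * remaining / max(total_steps - warmup_steps, 1)


def effective_batch_size(per_device, accumulation_steps, num_devices=1):
    """Number of examples contributing to each optimizer update."""
    return per_device * accumulation_steps * num_devices


for step in (0, 50, 99, 500, 1000):
    print(step, lr_at_step(step, total_steps=1000))

print(effective_batch_size(per_device=4, accumulation_steps=8))
```

With a per-device batch of 4 and 8 accumulation steps, each update sees the same number of examples as a batch of 32 would, at the memory cost of a batch of 4.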

Catastrophic forgetting

Fine-tune too aggressively and the model forgets what it learned in pretraining.

Solutions:

- Early stopping

- Lower learning rate

- Mix in pretraining data

- Use LoRA (original weights frozen)
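Early stopping, the first item in the list, can be sketched as a small helper that watches validation loss and halts once it stops improving. The class name, the patience of 2, and the loss values are made up for illustration. The transformers library also provides an `EarlyStoppingCallback` for `Trainer`, which is likely the better choice in practice.

```python
class EarlyStopping:
    """Stop when validation loss has not improved for `patience` evaluations."""

    def __init__(self, patience=2, min_delta=0.0):
        self.patience = patience
        self.min_delta = min_delta
        self.best = float("inf")
        self.bad_evals = 0

    def should_stop(self, val_loss):
        if val_loss < self.best - self.min_delta:
            self.best = val_loss   # new best: reset the counter
            self.bad_evals = 0
        else:
            self.bad_evals += 1    # no improvement this evaluation
        return self.bad_evals >= self.patience


val_losses = [1.9, 1.6, 1.5, 1.55, 1.6, 1.7]  # made-up values for illustration
stopper = EarlyStopping(patience=2)
stopped_at = None
for i, loss in enumerate(val_losses):
    if stopper.should_stop(loss):
        stopped_at = i
        break

print(f"stopped at evaluation {stopped_at}, best loss {stopper.best}")
```

To catch forgetting specifically, it helps to track a second validation set drawn from general-purpose data alongside the task data.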

Evaluation

- Perplexity: On held-out data

- Task metrics: Accuracy, F1, etc.

- Human eval: Does it actually work for your use case?

Don’t trust loss alone. Evaluate on real examples.
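The first two metrics are easy to compute by hand, which helps when sanity-checking a library's numbers. The sketch below assumes you already have per-token natural-log probabilities from the model and binary predictions for a classification task; both helper functions are illustrative.

```python
import math


def perplexity(token_log_probs):
    """exp of the mean negative log-likelihood per token (natural log)."""
    nll = -sum(token_log_probs) / len(token_log_probs)
    return math.exp(nll)


def f1_score(predictions, labels, positive=1):
    """Harmonic mean of precision and recall for the positive class."""
    tp = sum(p == positive and y == positive for p, y in zip(predictions, labels))
    fp = sum(p == positive and y != positive for p, y in zip(predictions, labels))
    fn = sum(p != positive and y == positive for p, y in zip(predictions, labels))
    if tp == 0:
        return 0.0
    precision = tp / (tp + fp)
    recall = tp / (tp + fn)
    return 2 * precision * recall / (precision + recall)


# A model that assigns probability 0.25 to every token behaves like a uniform
# guess among 4 options.
ppl = perplexity([math.log(0.25)] * 4)
f1 = f1_score([1, 1, 0, 0], [1, 0, 1, 0])
print(ppl, f1)
```

Lower perplexity means the model finds the held-out text less surprising, but it says nothing about whether the outputs are useful, which is why the human evaluation step still matters.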
