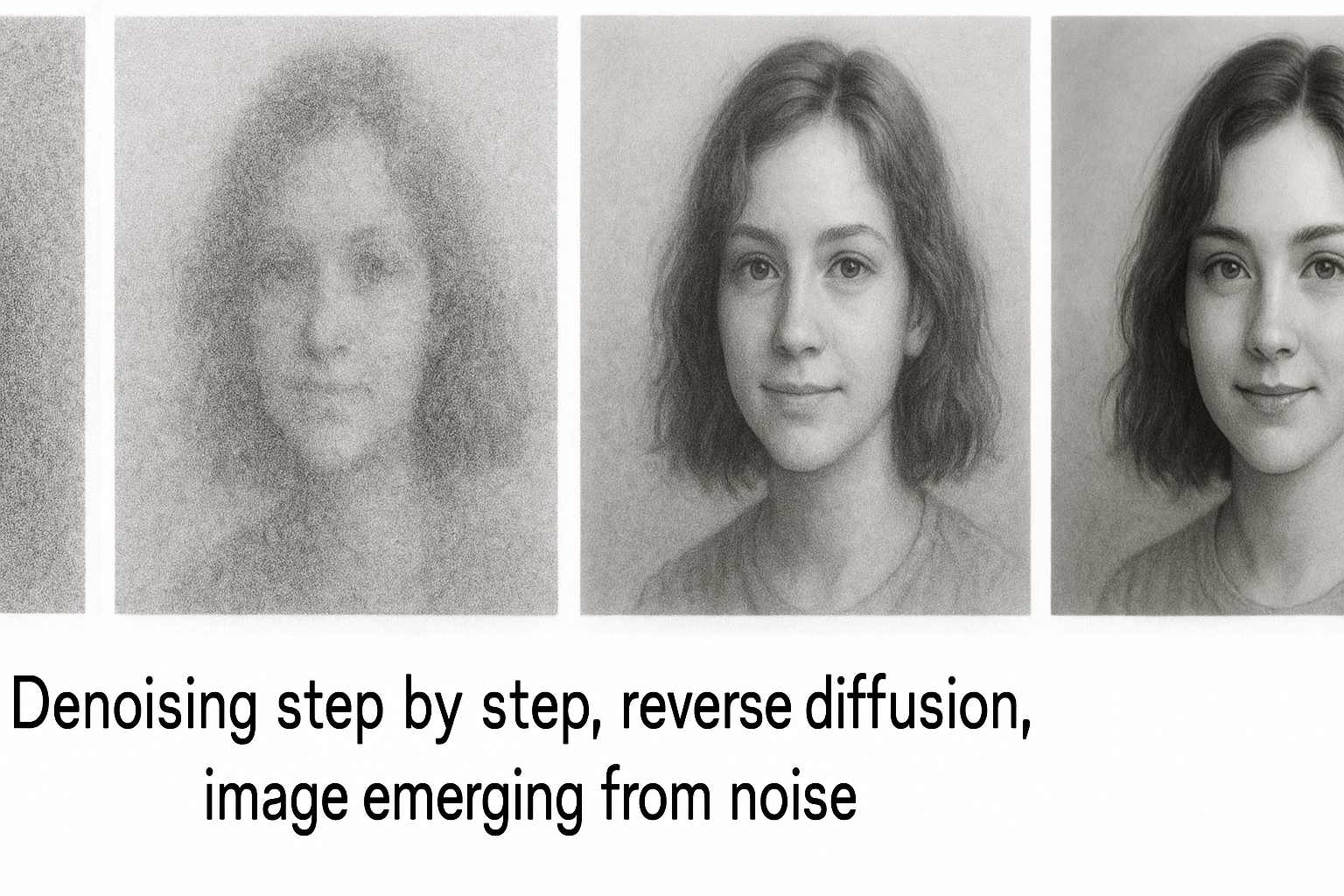

Diffusion’s key insight: destroying structure is easy (add noise), creating structure is hard. Learn to reverse the easy process → generate from scratch.

Part 3 of 7 in the Diffusion Models series.

Forward process

Gradually add Gaussian noise over T timesteps:

$$q(x_t | x_{t-1}) = \mathcal{N}(x_t; \sqrt{1-\beta_t}x_{t-1}, \beta_t I)$$

β_t is the noise schedule: the variance of the noise added at step t. Values are small, typically 0.0001 to 0.02.

After T steps (T ≈ 1000), the image is essentially pure Gaussian noise.
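To watch the forward process pull a sample toward noise, here is a minimal sketch that applies the per-step update to scalar "pixels" in plain Python. The schedule endpoints come from the text; the starting value 5.0, the sample count, and the helper names `linear_betas` and `forward_chain` are illustrative assumptions.

```python
import math
import random

def linear_betas(T, beta_start=1e-4, beta_end=0.02):
    """Linearly spaced betas (endpoints taken from the text; T is a free choice)."""
    step = (beta_end - beta_start) / (T - 1)
    return [beta_start + i * step for i in range(T)]

def forward_chain(x_0, betas, rng):
    """Apply q(x_t | x_{t-1}) once per beta and return the final x_T."""
    x = x_0
    for beta in betas:
        # x_t = sqrt(1 - beta_t) * x_{t-1} + sqrt(beta_t) * eps,  eps ~ N(0, 1)
        x = math.sqrt(1.0 - beta) * x + math.sqrt(beta) * rng.gauss(0.0, 1.0)
    return x

rng = random.Random(0)
betas = linear_betas(T=1000)
# Every chain starts from the same "pixel" value, far from zero.
samples = [forward_chain(5.0, betas, rng) for _ in range(2000)]

mean = sum(samples) / len(samples)
var = sum((s - mean) ** 2 for s in samples) / len(samples)
print(f"mean={mean:.3f}, var={var:.3f}")  # expected to land close to 0 and 1
```

The starting value is forgotten: whatever x_0 was, the samples end up looking like draws from a standard normal.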

Interactive demo: Flow Matching Animation

Closed-form sampling

Don’t need to iterate. Sample x_t directly from x_0:

$$q(x_t | x_0) = \mathcal{N}(x_t; \sqrt{\bar{\alpha}_t}x_0, (1-\bar{\alpha}_t)I)$$

Where $\bar{\alpha}_t = \prod_{s=1}^t (1-\beta_s)$
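Why this works, as a short sketch. The shorthand $\alpha_t = 1-\beta_t$ and the noise symbols $\epsilon_t$, $\bar{\epsilon}$ are notation introduced here for the derivation. Write one forward step in sampled form, then substitute the previous step:

$$x_t = \sqrt{\alpha_t}\,x_{t-1} + \sqrt{1-\alpha_t}\,\epsilon_t, \qquad \epsilon_t \sim \mathcal{N}(0, I)$$

$$x_t = \sqrt{\alpha_t\alpha_{t-1}}\,x_{t-2} + \sqrt{\alpha_t(1-\alpha_{t-1})}\,\epsilon_{t-1} + \sqrt{1-\alpha_t}\,\epsilon_t$$

The two noise terms are independent Gaussians, so their variances add: $\alpha_t(1-\alpha_{t-1}) + (1-\alpha_t) = 1 - \alpha_t\alpha_{t-1}$. They merge into a single noise term:

$$x_t = \sqrt{\alpha_t\alpha_{t-1}}\,x_{t-2} + \sqrt{1-\alpha_t\alpha_{t-1}}\,\bar{\epsilon}, \qquad \bar{\epsilon} \sim \mathcal{N}(0, I)$$

Repeat down to $x_0$ and the product of alphas becomes $\bar{\alpha}_t$:

$$x_t = \sqrt{\bar{\alpha}_t}\,x_0 + \sqrt{1-\bar{\alpha}_t}\,\epsilon, \qquad \epsilon \sim \mathcal{N}(0, I)$$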

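    import torch

    def q_sample(x_0, t, noise=None):
        # Draw x_t ~ q(x_t | x_0) in one step; t holds one timestep index per batch element.
        if noise is None:
            noise = torch.randn_like(x_0)
        # Reshape the per-sample coefficients so they broadcast over the non-batch dimensions.
        shape = (-1,) + (1,) * (x_0.dim() - 1)
        sqrt_alpha_bar = sqrt_alpha_bar_schedule[t].reshape(shape)
        sqrt_one_minus_alpha_bar = sqrt_one_minus_alpha_bar_schedule[t].reshape(shape)
        return sqrt_alpha_bar * x_0 + sqrt_one_minus_alpha_bar * noise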

Single operation: weighted sum of original image and noise.

Noise schedules

Linear: β increases linearly

    betas = torch.linspace(0.0001, 0.02, T)

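Cosine: Adds noise more slowly in the early steps, so image information survives longer. It was introduced to improve on the linear schedule at low resolutions (such as 64×64 and 32×32).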

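    import numpy as np

    def cosine_schedule(t, T, s=0.008):
        # alpha_bar(t) = f(t) / f(0), with f(u) = cos^2(((u/T + s) / (1 + s)) * pi/2)
        f_t = np.cos((t / T + s) / (1 + s) * np.pi / 2) ** 2
        f_0 = np.cos(s / (1 + s) * np.pi / 2) ** 2
        # Returns 1 - alpha_bar(t): the cumulative noise variance at step t, not the per-step beta_t
        return 1 - f_t / f_0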

Schedule choice affects generation quality significantly.
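To make the difference concrete, the sketch below compares how much signal ($\bar{\alpha}_t$) each schedule keeps at a few timesteps, and recovers per-step betas from the cosine curve via $\beta_t = 1 - \bar{\alpha}_t / \bar{\alpha}_{t-1}$. It is plain Python; the helper names and the 0.999 cap on beta are assumptions made for this example.

```python
import math

def linear_alpha_bar(T, beta_start=1e-4, beta_end=0.02):
    """Cumulative product of (1 - beta_t) for a linear schedule, t = 1..T."""
    alpha_bar, running = [], 1.0
    for i in range(T):
        beta = beta_start + (beta_end - beta_start) * i / (T - 1)
        running *= 1.0 - beta
        alpha_bar.append(running)
    return alpha_bar

def cosine_alpha_bar(T, s=0.008):
    """alpha_bar_t = f(t) / f(0), f(t) = cos^2(((t/T + s) / (1 + s)) * pi/2), t = 1..T."""
    def f(t):
        return math.cos((t / T + s) / (1 + s) * math.pi / 2) ** 2
    return [f(t) / f(0) for t in range(1, T + 1)]

def betas_from_alpha_bar(alpha_bar, max_beta=0.999):
    """beta_t = 1 - alpha_bar_t / alpha_bar_{t-1}, capped (the cap is an assumed choice)."""
    betas, prev = [], 1.0  # alpha_bar_0 = 1: no noise before the first step
    for ab in alpha_bar:
        betas.append(min(1.0 - ab / prev, max_beta))
        prev = ab
    return betas

T = 1000
lin_ab, cos_ab = linear_alpha_bar(T), cosine_alpha_bar(T)
cos_betas = betas_from_alpha_bar(cos_ab)
for t in (100, 500, 900):
    print(f"t={t}: linear alpha_bar={lin_ab[t - 1]:.4f}, cosine alpha_bar={cos_ab[t - 1]:.4f}")
```

At the midpoint the cosine schedule still keeps roughly half of the signal variance, while the linear schedule has already dropped below a tenth.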

Computing the schedule

    def get_schedule(betas):
        alphas = 1 - betas
        alpha_bar = torch.cumprod(alphas, dim=0)
        sqrt_alpha_bar = torch.sqrt(alpha_bar)
        sqrt_one_minus_alpha_bar = torch.sqrt(1 - alpha_bar)
        return sqrt_alpha_bar, sqrt_one_minus_alpha_bar

Reverse process

Learn to undo the noise:

$$p_\theta(x_{t-1}|x_t) = \mathcal{N}(x_{t-1}; \mu_\theta(x_t, t), \Sigma_\theta(x_t, t))$$

Neural network predicts mean (and optionally variance).
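A minimal sketch of one reverse step, in plain Python on scalars. Two things here are assumptions rather than anything stated above: the mean is written in terms of a predicted noise using the common DDPM form $\mu = \frac{1}{\sqrt{1-\beta_t}}\left(x_t - \frac{\beta_t}{\sqrt{1-\bar{\alpha}_t}}\,\epsilon_{pred}\right)$, and the variance is fixed to $\beta_t$ instead of being learned. The hypothetical `oracle_eps` stands in for the network: it returns the exact noise for a dataset that contains a single point.

```python
import math
import random

def make_schedule(T, beta_start=1e-4, beta_end=0.02):
    """Linear betas plus the running product alpha_bar_t = prod(1 - beta_s)."""
    betas = [beta_start + (beta_end - beta_start) * i / (T - 1) for i in range(T)]
    alpha_bar, running = [], 1.0
    for beta in betas:
        running *= 1.0 - beta
        alpha_bar.append(running)
    return betas, alpha_bar

def reverse_step(x_t, t, eps_pred, betas, alpha_bar, rng):
    """One ancestral step x_t -> x_{t-1} (t is a 0-based index into the schedule)."""
    beta = betas[t]
    # Mean of p_theta(x_{t-1} | x_t), written in terms of the predicted noise.
    mean = (x_t - beta / math.sqrt(1.0 - alpha_bar[t]) * eps_pred) / math.sqrt(1.0 - beta)
    if t == 0:
        return mean  # no noise is added on the final step
    # Assumed fixed variance sigma_t^2 = beta_t (a learned variance is the alternative).
    return mean + math.sqrt(beta) * rng.gauss(0.0, 1.0)

def oracle_eps(x_t, t, alpha_bar, x_0):
    """Stand-in for the network: the exact noise when the data is the single point x_0."""
    return (x_t - math.sqrt(alpha_bar[t]) * x_0) / math.sqrt(1.0 - alpha_bar[t])

rng = random.Random(0)
T, target = 1000, 0.7  # hypothetical toy values
betas, alpha_bar = make_schedule(T)
x = rng.gauss(0.0, 1.0)  # start from pure noise
for t in reversed(range(T)):
    x = reverse_step(x, t, oracle_eps(x, t, alpha_bar, target), betas, alpha_bar, rng)
print(x)  # lands on the target, up to floating-point error
```

With a perfect noise predictor the chain walks from noise back to the data point. A trained network replaces `oracle_eps`, and the quality of its predictions decides where the chain ends up.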

What does the network predict?

Three equivalent parameterizations:

1. Predict x_0: Network outputs: “What was the original clean image?”

2. Predict ε (noise): Network outputs: “What noise was added?” Most common. Simpler loss.

3. Predict v: $$v = \sqrt{\bar{\alpha}_t}\epsilon - \sqrt{1-\bar{\alpha}_t}x_0$$ Better for some applications.
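The three targets are interchangeable in the sense that, given x_t and t, any one of them determines the other two (the training losses still weight timesteps differently). A small sketch on scalars, using the v formula above and the closed-form relation $x_t = \sqrt{\bar{\alpha}_t}x_0 + \sqrt{1-\bar{\alpha}_t}\epsilon$; the function names and the example numbers are assumptions for illustration.

```python
import math

def to_v(x_0, eps, alpha_bar_t):
    """v = sqrt(alpha_bar) * eps - sqrt(1 - alpha_bar) * x_0 (formula from the text)."""
    return math.sqrt(alpha_bar_t) * eps - math.sqrt(1.0 - alpha_bar_t) * x_0

def x0_from_eps(x_t, eps, alpha_bar_t):
    """Invert x_t = sqrt(alpha_bar) * x_0 + sqrt(1 - alpha_bar) * eps for x_0."""
    return (x_t - math.sqrt(1.0 - alpha_bar_t) * eps) / math.sqrt(alpha_bar_t)

def x0_from_v(x_t, v, alpha_bar_t):
    """x_0 = sqrt(alpha_bar) * x_t - sqrt(1 - alpha_bar) * v."""
    return math.sqrt(alpha_bar_t) * x_t - math.sqrt(1.0 - alpha_bar_t) * v

def eps_from_v(x_t, v, alpha_bar_t):
    """eps = sqrt(1 - alpha_bar) * x_t + sqrt(alpha_bar) * v."""
    return math.sqrt(1.0 - alpha_bar_t) * x_t + math.sqrt(alpha_bar_t) * v

# Hypothetical scalar example: pick x_0, eps and a noise level, then round-trip.
x_0, eps, alpha_bar_t = 0.3, -1.2, 0.6
x_t = math.sqrt(alpha_bar_t) * x_0 + math.sqrt(1.0 - alpha_bar_t) * eps
v = to_v(x_0, eps, alpha_bar_t)

print(x0_from_eps(x_t, eps, alpha_bar_t))  # recovers x_0 from the noise
print(x0_from_v(x_t, v, alpha_bar_t))      # recovers x_0 from v
print(eps_from_v(x_t, v, alpha_bar_t))     # recovers eps from v
```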

Noise prediction loss

$$\mathcal{L} = \mathbb{E}_{t, x_0, \epsilon}\left[\|\epsilon - \epsilon_\theta(x_t, t)\|^2\right]$$

Simple MSE between true noise and predicted noise.

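    import torch
    import torch.nn.functional as F

    def diffusion_loss(model, x_0, t):
        # Sample the noise the model will be asked to recover
        noise = torch.randn_like(x_0)
        # Noise the clean image to timestep t in closed form
        x_t = q_sample(x_0, t, noise)
        # Predict the noise from the noisy image and the timestep
        predicted_noise = model(x_t, t)
        # MSE between predicted and true noise
        return F.mse_loss(predicted_noise, noise)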

Training loop sketch

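    for batch in dataloader:
        # Sample one random timestep per image, on the batch's device
        # (the schedule tensors are assumed to live on that device too)
        t = torch.randint(0, T, (batch.shape[0],), device=batch.device)
        # Compute loss
        loss = diffusion_loss(model, batch, t)
        # Update
        optimizer.zero_grad()
        loss.backward()
        optimizer.step()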
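For a version of this loop that runs without a dataset or a GPU, here is a toy stand-in in plain Python. Everything specific is an assumption made for illustration: the data is 1-D and Gaussian, the schedule has only 10 coarse steps, and the "network" is one linear predictor per timestep trained with hand-written SGD. The structure mirrors the loop above: sample data, sample a random t, noise it, predict the noise, step on the squared error.

```python
import math
import random

rng = random.Random(0)
T = 10
betas = [0.05 + 0.45 * i / (T - 1) for i in range(T)]  # hypothetical coarse schedule
alpha_bar, running = [], 1.0
for beta in betas:
    running *= 1.0 - beta
    alpha_bar.append(running)

# Toy "network": one linear predictor eps_hat = w[t] * x_t + c[t] per timestep.
w, c = [0.0] * T, [0.0] * T

def sample_example():
    """Draw (x_t, t, eps) the same way the training loop does, for 1-D data."""
    x_0 = rng.gauss(2.0, 0.5)   # hypothetical data distribution
    t = rng.randrange(T)        # uniform random timestep
    eps = rng.gauss(0.0, 1.0)   # the noise the model must predict
    x_t = math.sqrt(alpha_bar[t]) * x_0 + math.sqrt(1.0 - alpha_bar[t]) * eps
    return x_t, t, eps

def average_loss(n=2000):
    """Monte Carlo estimate of the noise-prediction MSE."""
    total = 0.0
    for _ in range(n):
        x_t, t, eps = sample_example()
        total += (w[t] * x_t + c[t] - eps) ** 2
    return total / n

loss_before = average_loss()
lr = 0.02
for step in range(50000):
    x_t, t, eps = sample_example()
    err = w[t] * x_t + c[t] - eps  # prediction error
    # Gradient of err^2 with respect to w[t] and c[t], followed by an SGD update.
    w[t] -= lr * 2.0 * err * x_t
    c[t] -= lr * 2.0 * err
loss_after = average_loss()
print(f"loss before: {loss_before:.3f}, after: {loss_after:.3f}")
```

Before training the predictor outputs zero, so the loss sits near 1 (the variance of the noise). After training it drops well below that, with most of the gain at high noise levels where x_t is almost entirely noise.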

Intuition

t=0: Image clean, no noise to predict → trivial

t=T: All noise, original image gone → predict from prior

Middle t: Partial noise → use image structure + noise statistics

Network learns different denoising strategies for different noise levels.
