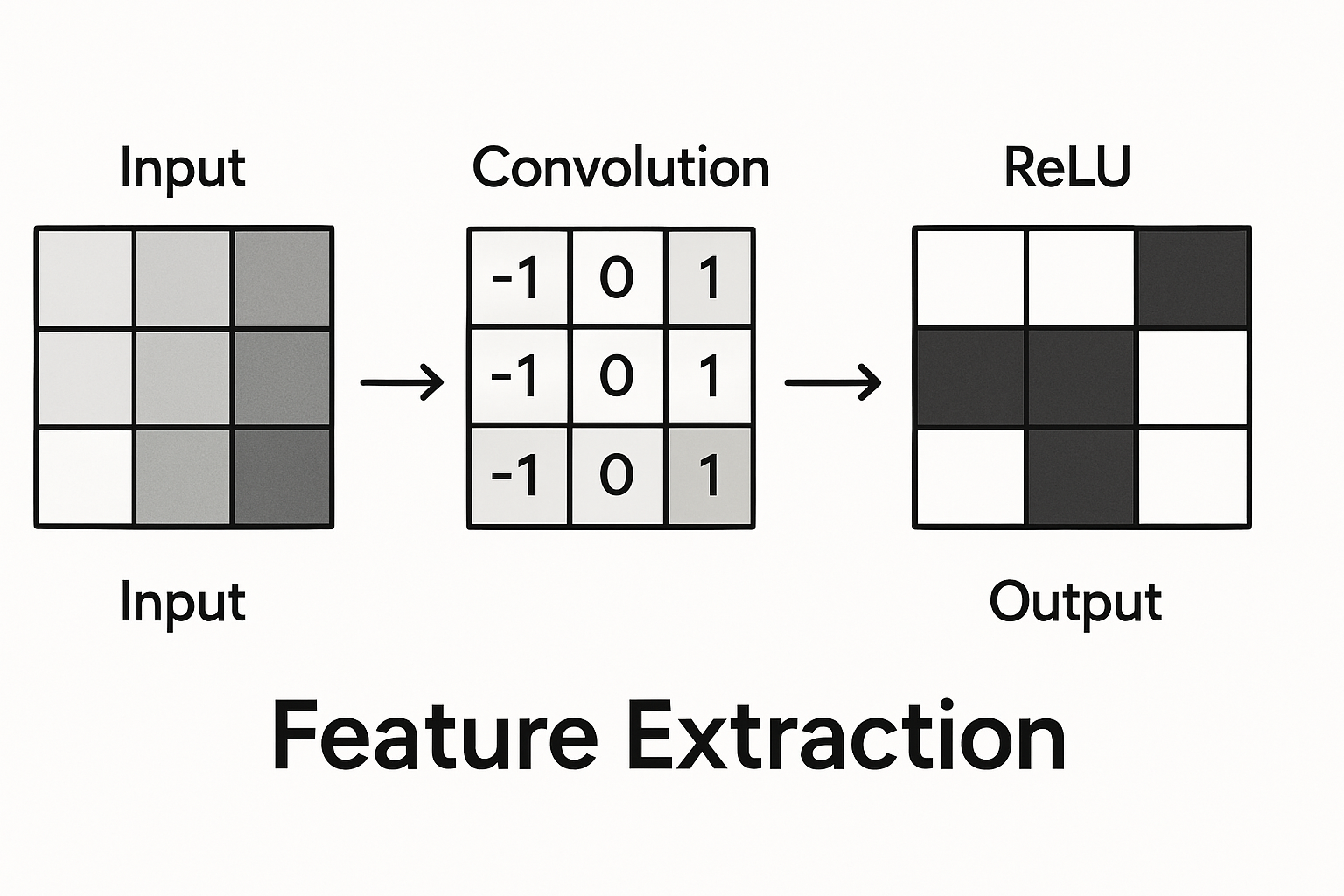

Convolution followed by ReLU. This pattern repeats dozens, sometimes hundreds, of times in a modern image network. Simple, but there’s a reason it works.

The pattern

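```python
import torch
import torch.nn as nn

# Basic building block
conv = nn.Conv2d(in_channels=3, out_channels=16, kernel_size=3, padding=1)
relu = nn.ReLU()
x = torch.randn(1, 3, 32, 32)  # (batch, channels, height, width)
output = relu(conv(x))         # shape: (1, 16, 32, 32); padding=1 keeps 32x32
```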

Conv extracts features. ReLU adds nonlinearity.


Why ReLU after conv?

Convolution alone is linear (strictly, affine once you add the bias). A stack of linear operations collapses into one linear operation. Without something in between, there’s no point going deep.

$$\text{Conv}_2(\text{Conv}_1(x)) = \text{Conv}_{\text{combined}}(x)$$

ReLU breaks linearity. The stack can no longer collapse, so now deeper = more powerful.
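The collapse in the equation above can be checked numerically. This is a minimal sketch, assuming bias-free kernels, stride 1, and no padding (a bias makes each layer affine rather than linear, but a composition of affine maps is still affine, so the argument holds; padding only complicates the borders). `combine_kernels` is a hypothetical helper written for this example: it folds two 3x3 kernels into a single 5x5 kernel, and the two paths then agree.

```python
import torch
import torch.nn.functional as F

torch.manual_seed(0)

# Two bias-free 3x3 kernels; float64 keeps rounding error out of the comparison
w1 = torch.randn(8, 3, 3, 3, dtype=torch.float64)   # Conv1: 3 -> 8 channels
w2 = torch.randn(16, 8, 3, 3, dtype=torch.float64)  # Conv2: 8 -> 16 channels


def combine_kernels(w1, w2):
    """Fold conv2(conv1(x)) into one kernel (hypothetical helper; stride 1, no padding).

    F.conv2d computes cross-correlation, so the combined kernel is the full
    (true) convolution of the two kernels, summed over the middle channels.
    """
    k = F.conv2d(
        w1.permute(1, 0, 2, 3),       # (in, mid, 3, 3): each input channel becomes a batch item
        torch.flip(w2, dims=(2, 3)),  # flipping turns correlation into convolution
        padding=w2.shape[-1] - 1,     # pad by k-1 on each side for a "full" convolution
    )
    return k.permute(1, 0, 2, 3).contiguous()  # (out, in, 5, 5)


x = torch.randn(1, 3, 32, 32, dtype=torch.float64)
two_convs = F.conv2d(F.conv2d(x, w1), w2)        # Conv2(Conv1(x)), shape (1, 16, 28, 28)
one_conv = F.conv2d(x, combine_kernels(w1, w2))  # Conv_combined(x), same shape

print(torch.allclose(two_convs, one_conv))  # True
```

Put a ReLU between the two convolutions and, in general, no single kernel reproduces the result.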

What actually happens

- Conv: Weighted sum over each local region (plus a bias). Responds strongly where the input matches the kernel’s pattern.

- ReLU: Keeps positive activations, zeros negative ones: max(0, x).

The “feature map” after ReLU shows where patterns were detected.

- Negative activation = “not this pattern” (ReLU turns it into 0)
- Positive activation = “yes, this pattern, and this strongly”
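A tiny hand-built example makes the sign convention concrete. The image, kernel values, and names are made up for illustration; real networks learn their kernels. The kernel responds to a dark-to-bright vertical edge (right column minus left column). On a dark/bright/dark stripe it is positive at the rising edge, zero over flat regions, and negative at the falling edge, which ReLU then zeros out.

```python
import torch
import torch.nn.functional as F

# Toy 3x8 grayscale image: dark, then a bright vertical stripe, then dark again
row = torch.tensor([0., 0., 0., 1., 1., 1., 0., 0.])
image = row.repeat(3, 1).view(1, 1, 3, 8)  # (batch, channels, height, width)

# Hand-made "dark-to-bright vertical edge" kernel: right column minus left column
kernel = torch.tensor([[-1., 0., 1.],
                       [-1., 0., 1.],
                       [-1., 0., 1.]]).view(1, 1, 3, 3)

pre_activation = F.conv2d(image, kernel)  # weighted sum over each 3x3 window
feature_map = F.relu(pre_activation)      # keep the "yes" answers, zero the "no" answers

print(pre_activation.flatten().tolist())  # [0.0, 3.0, 3.0, 0.0, -3.0, -3.0]
print(feature_map.flatten().tolist())     # [0.0, 3.0, 3.0, 0.0, 0.0, 0.0]
```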

Adding batch norm

Modern networks add batch normalization:

```python
import torch.nn as nn

# Conv → BatchNorm → ReLU
conv_bn_relu = nn.Sequential(
    nn.Conv2d(64, 128, 3, padding=1),
    nn.BatchNorm2d(128),
    nn.ReLU(),
)
```

Or sometimes:

```python
import torch.nn as nn

# Conv → ReLU → BatchNorm
conv_relu_bn = nn.Sequential(
    nn.Conv2d(64, 128, 3, padding=1),
    nn.ReLU(),
    nn.BatchNorm2d(128),
)
```

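Both work. The first (BatchNorm right after the conv, before the activation) is more common; it is the placement used in the original BatchNorm and ResNet papers.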
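One practical consequence of putting BatchNorm right after the conv: in training mode BatchNorm subtracts the per-channel batch mean, so any constant bias the conv adds is cancelled, and BatchNorm’s own shift (beta) covers that role. That is why Conv → BatchNorm blocks are usually built with `bias=False` (torchvision’s ResNet does this). The sketch below checks the cancellation; the layer sizes, batch shape, and the exaggerated bias value are arbitrary choices for illustration.

```python
import torch
import torch.nn as nn

torch.manual_seed(0)

conv = nn.Conv2d(64, 128, 3, padding=1)  # bias=True is the nn.Conv2d default
bn = nn.BatchNorm2d(128)
bn.train()  # training mode: normalize with the current batch's mean and variance

x = torch.randn(8, 64, 16, 16)

with torch.no_grad():
    conv.bias.fill_(3.0)           # exaggerate the conv bias so any effect would be obvious
    out_with_bias = bn(conv(x))
    conv.bias.zero_()              # remove the conv bias entirely
    out_without_bias = bn(conv(x))

# Same result: the per-channel bias was subtracted along with the batch mean
print(torch.allclose(out_with_bias, out_without_bias, atol=1e-4))  # True
```

In a real model you would simply write `nn.Conv2d(64, 128, 3, padding=1, bias=False)` before the `nn.BatchNorm2d(128)` and save the redundant parameters.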

In ResNet

ResNet uses this in blocks with skip connections:

```python
import torch.nn as nn
import torch.nn.functional as F


class ResBlock(nn.Module):
    def __init__(self, channels):
        super().__init__()
        self.conv1 = nn.Conv2d(channels, channels, 3, padding=1)
        self.bn1 = nn.BatchNorm2d(channels)
        self.conv2 = nn.Conv2d(channels, channels, 3, padding=1)
        self.bn2 = nn.BatchNorm2d(channels)

    def forward(self, x):
        residual = x
        out = F.relu(self.bn1(self.conv1(x)))  # Conv → BN → ReLU
        out = self.bn2(self.conv2(out))        # Conv → BN, no ReLU yet
        out += residual                        # skip connection
        out = F.relu(out)                      # ReLU after the addition
        return out
```

Note: the final ReLU comes after the addition, not before.
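A tensor-level sketch of why the ordering matters, with random values standing in for real activations (the names `x` and `branch` are illustrative, not from the block above): if the ReLU were applied to the residual branch before the addition, the branch could only ever add non-negative amounts, so it could push activations up but never down. Applying ReLU after the sum lets the branch lower activations too.

```python
import torch
import torch.nn.functional as F

torch.manual_seed(0)

x = torch.rand(1000)        # skip path: non-negative, like a previous ReLU's output
branch = torch.randn(1000)  # stand-in for bn2(conv2(...)), the residual branch

relu_before_add = x + F.relu(branch)  # branch contribution is always >= 0
relu_after_add = F.relu(x + branch)   # ordering used in the ResBlock

print(bool((relu_before_add >= x).all()))  # True: never decreases an activation
print(bool((relu_after_add < x).any()))    # True: can decrease activations
```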

What features look like

- Early layers: edges, colors, simple textures
- Middle layers: parts, patterns, shapes
- Late layers: objects, concepts

ReLU essentially creates “feature detectors” that fire (positive) or don’t (zero).
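“Fire or don’t” also means ReLU feature maps are sparse. A rough way to see it, assuming a freshly initialized layer and random input (a trained network on real images will give different numbers): count the outputs that are exactly zero after the ReLU. With a roughly zero-centered pre-activation, about half the units are switched off, and the per-channel firing rate shows how often each “detector” is active.

```python
import torch
import torch.nn as nn

torch.manual_seed(0)

block = nn.Sequential(nn.Conv2d(3, 64, 3, padding=1), nn.ReLU())
x = torch.randn(16, 3, 32, 32)  # random stand-in for a batch of RGB images

with torch.no_grad():
    feature_maps = block(x)  # (16, 64, 32, 32)

# Overall sparsity: share of activations the ReLU switched off
fraction_zero = (feature_maps == 0).float().mean().item()
print(f"{fraction_zero:.0%} of activations are exactly zero")

# Per-channel firing rate: how often each "detector" fires across batch and positions
firing_rate = (feature_maps > 0).float().mean(dim=(0, 2, 3))  # shape (64,)
print(firing_rate.min().item(), firing_rate.max().item())
```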

Alternatives to ReLU

Same pattern works with other activations:

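```python
import torch.nn as nn

# Same Conv → activation pattern; only the activation changes
# (64 → 128 channels is just an example size)
leaky = nn.Sequential(nn.Conv2d(64, 128, 3, padding=1), nn.LeakyReLU(0.1))  # handles dead neurons
gelu = nn.Sequential(nn.Conv2d(64, 128, 3, padding=1), nn.GELU())  # smoother, used in newer architectures
swish = nn.Sequential(nn.Conv2d(64, 128, 3, padding=1), nn.SiLU())  # Swish, self-gated
```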

But ReLU is still the default for most CNN applications.
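A quick way to see how these activations differ is to probe a negative input, exactly where ReLU outputs zero and passes back zero gradient (a unit stuck there stops learning, the “dead neuron” problem). The sketch below evaluates each activation at x = -2, an arbitrary choice, and records the value and gradient via autograd.

```python
import torch
import torch.nn as nn

activations = {
    "ReLU": nn.ReLU(),
    "LeakyReLU(0.1)": nn.LeakyReLU(0.1),
    "GELU": nn.GELU(),
    "SiLU": nn.SiLU(),
}

results = {}
for name, act in activations.items():
    x = torch.tensor(-2.0, requires_grad=True)
    y = act(x)
    y.backward()  # computes d(act)/dx at x = -2 into x.grad
    results[name] = (y.item(), x.grad.item())
    print(f"{name:>15}: f(-2) = {y.item():+.4f}, f'(-2) = {x.grad.item():+.4f}")
```

Only ReLU returns a zero gradient here; the others still let some signal flow back from negative inputs.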

Conv + ReLU pattern locked in? Show support by starring ML Animations and sharing on your social channels!